Navigating the AWS European Sovereign Cloud: Practical Strategies for a Multi-Partition Future

Abstract

The AWS European Sovereign Cloud (ESC) represents a fundamental shift in cloud computing for EU public sector and regulated industries. ESC is an independent AWS partition, physically and logically separate from the commercial partition, operated entirely within the EU to address digital sovereignty requirements. This whitepaper provides a practical framework for organizations adopting ESC through a strategic dual‑partition approach that combines ESC sovereignty with commercial AWS capabilities.

We examine the architectural implications of partition boundaries, control plane separation, and the operational complexities of managing infrastructure across multiple AWS partitions. Through detailed analysis of connectivity patterns, identity federation, and compliance mapping, this paper presents proven strategies for navigating ESC adoption whilst maintaining operational excellence.

Key takeaways: ESC solves sovereignty challenges but introduces operational complexity; dual‑partition strategies offer targeted sovereignty with retained innovation velocity; success requires deliberate operating models and partition‑aware tooling from day one.

1. Introduction

The European regulatory landscape increasingly demands data sovereignty, operational autonomy, and governance structures that align with EU values and oversight. Digital sovereignty has evolved from a policy aspiration to a technical requirement, particularly for public sector entities and organizations operating in highly regulated industries such as financial services, healthcare, and critical infrastructure.

The AWS European Sovereign Cloud (ESC) addresses these requirements through a new AWS partition that is operated, governed, and controlled entirely within the EU. Launched in January 2026, ESC provides complete independence of control planes, metadata handling, and operational oversight that goes beyond regional data residency features within the commercial partition. This creates both opportunities and challenges for organizations seeking to leverage cloud computing whilst meeting stringent sovereignty requirements.

This paper provides practical engineering patterns and operating models for ESC adoption and multi‑partition environments. We examine the technical implications of partition boundaries, present proven integration patterns, and offer guidance for organizations navigating the complexity of operating across multiple AWS partitions whilst maintaining operational excellence and innovation velocity.

This document is informational and not legal advice. Engage legal and compliance experts for interpretations of regulatory obligations.

Summary:

- ESC addresses EU sovereignty requirements through independent partition operation

- Multi‑partition strategies introduce complexity but enable targeted sovereignty

- Practical patterns exist for managing identity, connectivity, and operations across partitions

- Success requires deliberate operating models and partition‑aware architectural decisions

2. AWS Partitions

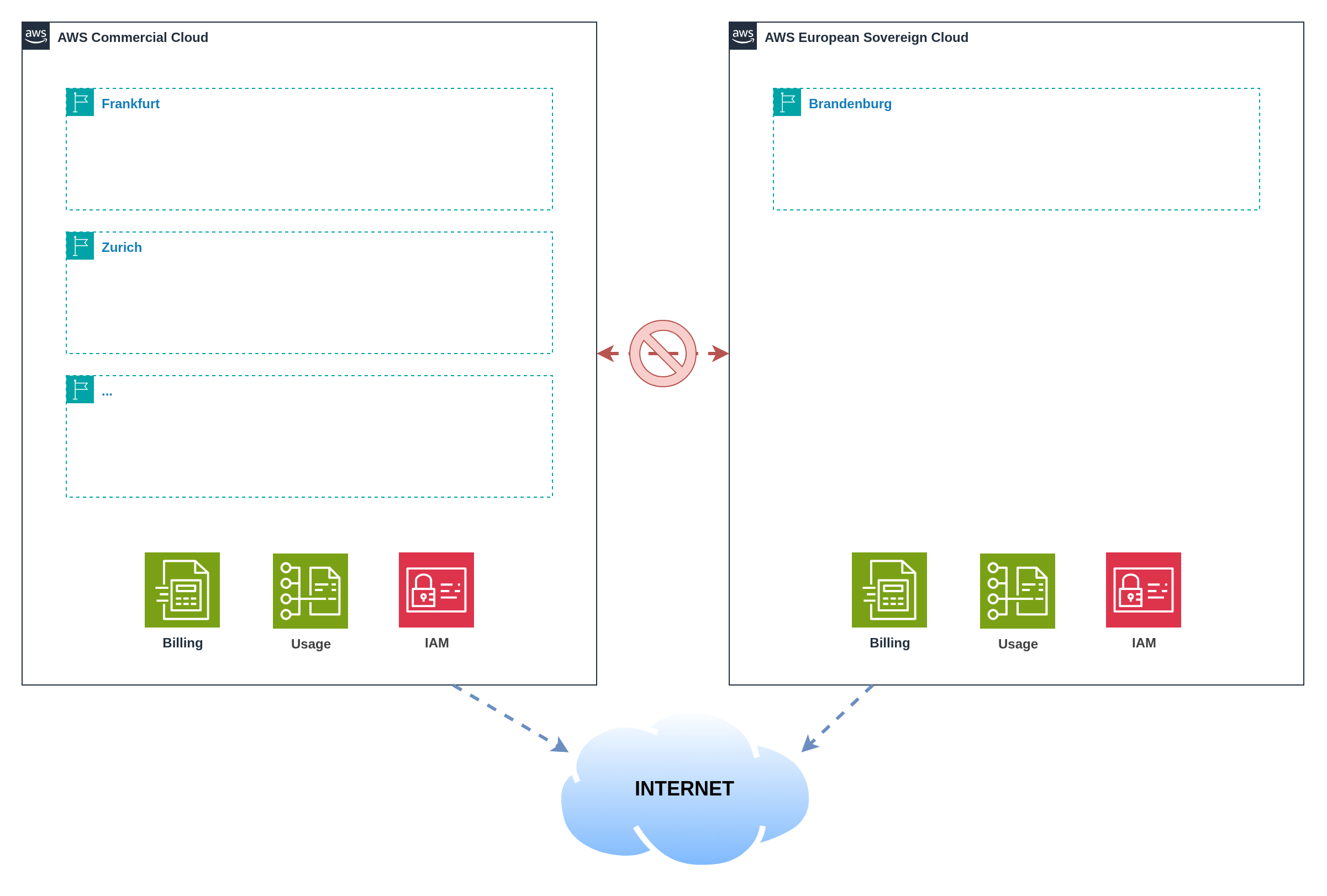

Figure 1: Partition model and control‑plane boundaries


AWS partitions represent fundamentally separate cloud environments with independent control planes, billing systems, and operational boundaries. The global commercial partition serves most customers worldwide, whilst specialised partitions like AWS GovCloud (US) and ESC address specific regulatory and sovereignty requirements.

ESC operates with complete separation from other partitions, including its own identity and access management system, billing infrastructure, and service control planes. This comprehensive separation ensures that all administrative actions, metadata, and operational telemetry remain within ESC's EU governance domain. For ESC, this means customer content, customer‑created metadata (including IAM roles, resource tags, and configuration data), and operational oversight remain entirely under EU control with no shared components or cross‑partition dependencies.

The implications extend beyond data residency. Organizations must establish new AWS accounts within each partition, as accounts cannot span partition boundaries. Service availability, feature roadmaps, and ecosystem maturity may differ between partitions. Support boundaries align with partition operations, meaning ESC support is provided by EU‑resident staff operating under EU governance structures.

Tooling and automation must be partition‑aware. APIs, CLI configurations, and Infrastructure as Code templates require specific endpoints and credentials for each partition. Cross‑partition resource references are impossible; integration occurs through application‑level connectivity patterns or shared external systems.

| Partition | Identifier | Primary Use Case | Geographic Scope |
|---|---|---|---|
| Commercial | aws | Global commercial cloud services | Worldwide (excluding China) |
| China | aws-cn | China-specific cloud services operated by local partners | China mainland |
| GovCloud (US) | aws-us-gov | US federal government and regulated industries | United States |
| ESC | aws-eusc | EU sovereignty and regulatory compliance | European Union |

The AWS European Sovereign Cloud launches with the initial region Brandenburg (eusc-de-east-1) in Germany. This represents the first EU-sovereign AWS region with complete operational independence from commercial AWS partitions.

ESC uses a distinct domain namespace (*.amazonaws.eu) separate from commercial AWS (*.amazonaws.com), reinforcing the complete partition separation at the API and service endpoint level.
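As a minimal sketch of what partition-aware endpoint handling can look like, the snippet below infers the partition from a region name and builds a regional endpoint URL. The `aws-eusc` suffix comes from the namespace described above. The prefix heuristic, the function names, and the `<service>.<region>.<suffix>` hostname pattern are illustrative assumptions, and not every service follows that pattern. Production tooling should rely on the endpoint resolution built into the AWS SDKs.

```python
# Partition -> DNS suffix. The aws-eusc entry follows the namespace named in
# the text; treat the whole table as an illustrative assumption.
DNS_SUFFIX = {
    "aws": "amazonaws.com",
    "aws-cn": "amazonaws.com.cn",
    "aws-us-gov": "amazonaws.com",
    "aws-eusc": "amazonaws.eu",
}

# Region-name prefixes used to infer the partition (heuristic, not an AWS API).
REGION_PREFIXES = (
    ("us-gov-", "aws-us-gov"),
    ("cn-", "aws-cn"),
    ("eusc-", "aws-eusc"),
)


def partition_for_region(region: str) -> str:
    """Return the partition identifier a region name most likely belongs to."""
    for prefix, partition in REGION_PREFIXES:
        if region.startswith(prefix):
            return partition
    return "aws"  # default: commercial partition


def regional_endpoint(service: str, region: str) -> str:
    """Build an endpoint URL, assuming the <service>.<region>.<suffix> pattern."""
    suffix = DNS_SUFFIX[partition_for_region(region)]
    return f"https://{service}.{region}.{suffix}"


if __name__ == "__main__":
    for region in ("eu-central-1", "eusc-de-east-1"):
        print(region, "->", regional_endpoint("sts", region))
```

Keeping the partition lookup in one place means pipelines and scripts do not hard-code `amazonaws.com`, which is a common source of failures when templates are copied between partitions.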

The partition identifier forms part of every Amazon Resource Name (ARN), enabling precise resource identification within the appropriate governance domain:

`arn:partition:service:region:account:resource`

ARN examples across partitions:

- Commercial partition: `arn:aws:iam::123456789012:role/my-role`

- GovCloud (US) partition: `arn:aws-us-gov:iam::123456789012:role/my-role`

- ESC partition: `arn:aws-eusc:iam::123456789012:role/my-role`
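Because the partition is the second field of every ARN, tooling can check it before acting on a resource. The following is a minimal sketch that splits an ARN according to the layout above and rejects ARNs from an unexpected partition. The names `Arn`, `parse_arn`, and `require_partition` are hypothetical helpers, not part of any AWS SDK.

```python
from typing import NamedTuple


class Arn(NamedTuple):
    partition: str
    service: str
    region: str
    account: str
    resource: str


def parse_arn(arn: str) -> Arn:
    """Split an ARN into its fields; the resource part may itself contain colons."""
    parts = arn.split(":", 5)
    if len(parts) != 6 or parts[0] != "arn":
        raise ValueError(f"not a valid ARN: {arn!r}")
    return Arn(*parts[1:])


def require_partition(arn: str, expected: str) -> Arn:
    """Raise if the ARN belongs to a partition other than the expected one."""
    parsed = parse_arn(arn)
    if parsed.partition != expected:
        raise ValueError(
            f"{arn!r} is in partition {parsed.partition!r}, expected {expected!r}"
        )
    return parsed


if __name__ == "__main__":
    print(parse_arn("arn:aws-eusc:iam::123456789012:role/my-role"))
```

A guard like this could run in a deployment pipeline to catch templates that still reference commercial-partition ARNs before they reach an ESC account.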

Summary:

- Partitions provide complete control plane separation and independent governance

- Cross‑partition account sharing is impossible; new accounts required per partition

- Service availability and ecosystem maturity may differ between partitions

- Tooling must be explicitly designed for partition‑aware operations

3. Why ESC Exists and What It Solves

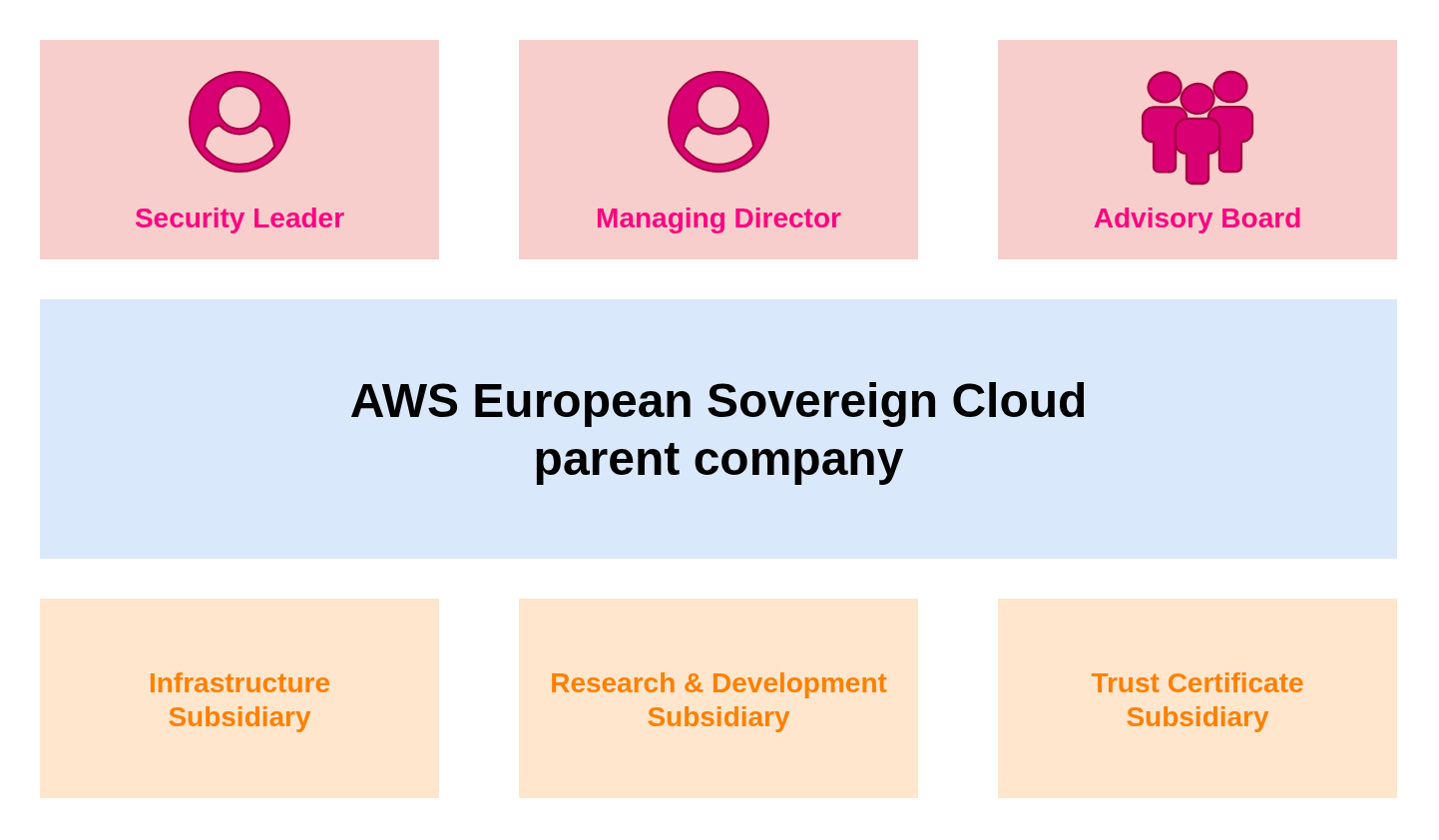

The AWS European Sovereign Cloud (ESC) addresses three primary challenges facing EU organizations: data residency requirements that extend beyond customer content to include metadata and operational telemetry; operational autonomy ensuring that cloud infrastructure management occurs within EU governance frameworks; and EU‑centric oversight providing independent governance aligned with European values and regulatory expectations.

ESC delivers these benefits through EU‑based operations with staff residing in and operating from EU member states. Customer content and customer‑created metadata remain within EU borders, including IAM configurations, resource tags, CloudTrail logs, and billing data. An independent governance structure provides European oversight of operations, security practices, and business decisions affecting ESC customers.

AWS Staff Requirements: ESC infrastructure is operated exclusively by EU‑resident AWS personnel under EU governance. This includes data centre operations, support escalations, security monitoring, and infrastructure management.

Customer Location Flexibility: ESC customers can be located anywhere globally. A US‑based multinational corporation, Asian financial institution, or Australian government agency can all use ESC to meet EU sovereignty requirements for their European operations, data processing, or regulatory compliance needs. Customer location, citizenship, or corporate domicile do not restrict ESC access.

The Value Proposition: ESC enables global organizations to leverage EU‑sovereign cloud infrastructure regardless of their headquarters location, providing EU data residency and governance without requiring the customer organisation itself to be EU‑based.

Figure 2: AWS European Sovereign Cloud (ESC) Governance Overview


The trade‑offs are significant. Organizations start from scratch and must rebuild their AWS foundation within the ESC partition. Service parity will evolve gradually, with initial ESC regions offering core services whilst niche capabilities and newest instance types arrive later. Pricing and procurement processes may differ from commercial AWS. Geographic redundancy is initially limited until multiple ESC regions become available, creating disaster recovery considerations that don't exist in the mature commercial partition.

Organizations must weigh stronger sovereignty posture against reduced service breadth, potentially higher costs, and operational complexity. The decision requires careful analysis of regulatory requirements, service dependencies, performance needs, and operational readiness for managing multi‑partition environments.

| Benefits | Trade‑offs |
|---|---|
| EU‑based operations and governance | Start from scratch and rebuild infrastructure |
| Customer content and metadata residency | Evolving service parity and feature availability |
| Independent European oversight | Potentially different pricing and procurement |
| Regulatory compliance assurance | Limited geographic redundancy initially |
| Familiar AWS APIs and tooling | Operational complexity for multi‑partition setups |

3.1 Regulatory Compliance Alignment

ESC addresses specific regulatory frameworks prevalent in EU public sector and regulated industries. Understanding how ESC characteristics map to compliance requirements enables organizations to leverage ESC capabilities effectively whilst maintaining comprehensive compliance postures.

GDPR (General Data Protection Regulation) benefits from ESC's EU‑resident data processing and storage capabilities. Customer content and metadata remain within EU jurisdiction, supporting data protection impact assessments and privacy by design requirements. EU‑resident operations provide direct accountability under European legal frameworks.

NIS2 (Network and Information Systems Directive) requirements for security measures and incident reporting align with ESC's EU‑based security operations and incident response capabilities. Critical infrastructure operators can demonstrate EU‑controlled cybersecurity measures and governance structures that align with national implementation requirements.

DORA (Digital Operational Resilience Act) for financial services benefits from ESC's independent governance and EU‑resident operations. Financial institutions can demonstrate operational resilience through EU‑controlled cloud services whilst maintaining ICT risk management frameworks that align with European supervisory expectations.

Sector‑specific regulations overlay additional requirements that ESC's governance model supports. Healthcare data processing under national implementations of GDPR, defence and security applications requiring national oversight, and public sector applications with citizen data protection requirements all benefit from ESC's sovereignty characteristics.

Artifact collection and evidence generation require partition‑aware approaches. Audit logs, configuration evidence, and compliance reports remain within appropriate jurisdictional boundaries whilst providing comprehensive evidence for regulatory examinations and assessments.

Summary:

- ESC provides complete sovereignty through EU‑based operations and governance

- Benefits include metadata residency and independent European oversight

- Trade‑offs include service parity evolution and operational complexity

- ESC addresses key EU regulatory frameworks through sovereignty and governance characteristics

- Evidence collection requires partition‑aware approaches to maintain jurisdictional boundaries

- Decision requires balancing sovereignty needs against functionality and complexity

4. Comparison: AWS GovCloud (US) and ESC

AWS GovCloud (US) provides a useful reference point for understanding partition design patterns and operational models. Both ESC and GovCloud represent separate partitions created to address specific regulatory and sovereignty requirements, operating with distinct control planes and governance structures.

Key commonalities include partition‑based isolation ensuring complete separation from commercial AWS operations, compliance‑driven design addressing specific regulatory frameworks, and distinct operational models with specialised staffing and oversight. Both partitions require new accounts, and in both, service availability is staged relative to commercial regions.

The differences reflect distinct regulatory contexts and operational requirements. ESC targets EU sovereignty requirements with EU‑resident operations, whilst GovCloud addresses US federal requirements with US‑person operations. Regulatory frameworks differ significantly: ESC aligns with GDPR, NIS2, and emerging EU digital sovereignty legislation, whilst GovCloud focuses on FedRAMP, ITAR, and US federal security standards.

Access eligibility varies significantly between partitions. ESC is available to any organisation or individual, similar to commercial AWS, enabling broad adoption for sovereignty requirements. GovCloud (US) has strict access restrictions requiring account holders to be US entities incorporated to do business in the United States, based on US soil, and operated by US persons (citizens or active Green Card holders) capable of handling ITAR export‑controlled data.

Staffing and operations residency requirements vary, with ESC emphasising EU residency and governance, whilst GovCloud requires US persons for certain operations. Service availability trajectories reflect different market priorities and regulatory approval processes. Marketplace ecosystems develop independently, with different vendor participation patterns and compliance requirements.

AWS European Sovereign Cloud (ESC) operates with dedicated billing systems independent from commercial AWS, providing complete financial sovereignty and EU-resident billing operations.

AWS GovCloud (US) has significant billing limitations: all billing and cost management must be accessed through an associated standard commercial AWS account. GovCloud accounts cannot view billing directly within the GovCloud console. Cost and Usage Reports for GovCloud are only available in the commercial partition, and Savings Plans must be purchased through the commercial account to apply to GovCloud usage.

Summary:

- Both partitions address sovereignty through separate control planes and governance

- Regulatory contexts differ significantly between US federal and EU sovereignty requirements

- Operational models reflect distinct staffing and oversight requirements

- Service and marketplace evolution follows different trajectories based on market needs

4.1 Service Availability Comparison

AWS maintains an official, up-to-date service and feature availability list for the European Sovereign Cloud. Use the link below to explore available services, filter by category, and compare across partitions:

AWS European Sovereign Cloud — Service & Feature Availability

5. Adoption Scenarios and Decision Framework

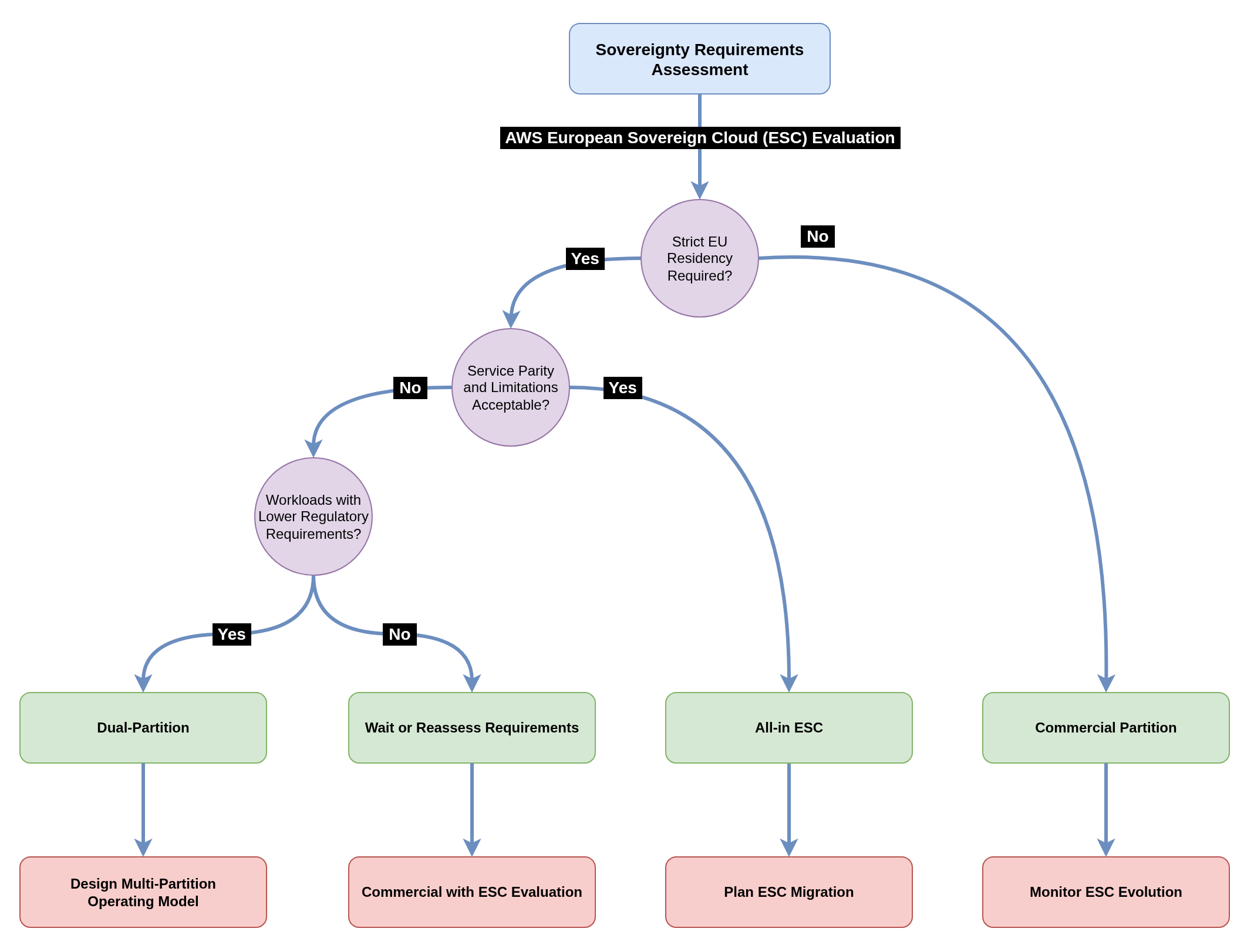

Organizations face three primary adoption scenarios when considering ESC. Each scenario presents distinct advantages and challenges that must be evaluated against specific requirements for sovereignty, service availability, operational complexity, and innovation velocity.

Scenario A: All‑in on ESC provides the strongest sovereignty posture by operating exclusively within the ESC partition. This approach simplifies compliance assurance and audit evidence collection, as all operations occur within the sovereign environment. However, organizations accept service availability limitations, reduced ecosystem maturity, and geographic redundancy constraints until multiple ESC regions become available.

Scenario B: Remain in Commercial Partition maintains full service parity and access to the global AWS ecosystem. Organizations benefit from mature services, extensive marketplace offerings, and proven operational patterns. This approach may not satisfy sovereignty requirements for regulated workloads or public sector entities with strict data residency mandates.

Scenario C: Selective ESC + Commercial (Dual‑Partition) offers targeted sovereignty where regulatory requirements demand it whilst retaining innovation velocity for non‑regulated workloads. Organizations can place sensitive data and regulated processes in ESC whilst leveraging commercial AWS for development, testing, and non‑sensitive operations. This approach introduces the highest operational complexity but enables both compliance and innovation.

The following decision tree provides a starting point for evaluating ESC adoption scenarios based on core regulatory and operational drivers. While sovereignty requirements and service dependencies form the primary decision criteria, organizations must also consider additional factors including latency sensitivity, cost constraints, operational readiness, and long-term strategic objectives.

Figure 3: Decision tree mapping regulatory, architectural, and operational drivers


Organizations should use this decision tree as an initial assessment tool, then conduct deeper analysis of their specific requirements. Beyond the primary sovereignty and service considerations, factors such as existing multi-region compliance experience, organizational change readiness, vendor relationship complexity, and technical team capabilities all influence the optimal adoption path. The decision tree simplifies complex trade-offs to provide directional guidance, but each organization's unique context requires thorough evaluation of all relevant considerations.
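To make the three scenarios concrete, the sketch below encodes one possible first-pass assessment in code. It is not a transcription of Figure 3: the branching logic, the `Workload` fields, and the `REVIEW` outcome are assumptions chosen for illustration, and the service names in the usage example are placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    sovereignty_required: bool      # hypothetical flag from data classification
    required_services: frozenset    # services the workload depends on


def recommend_scenario(workloads, esc_services):
    """Return 'A', 'B', 'C', or 'REVIEW' as a directional recommendation.

    A = all-in on ESC, B = remain in commercial, C = dual-partition.
    'REVIEW' means a regulated workload needs a service missing from ESC.
    """
    regulated = [w for w in workloads if w.sovereignty_required]
    if not regulated:
        return "B"
    blocked = [w for w in regulated if not w.required_services <= esc_services]
    if blocked:
        return "REVIEW"
    if len(regulated) == len(workloads):
        return "A"
    return "C"


if __name__ == "__main__":
    portfolio = [
        Workload("citizen-registry", True, frozenset({"service-a"})),
        Workload("marketing-site", False, frozenset({"service-b"})),
    ]
    print(recommend_scenario(portfolio, {"service-a"}))
```

A real assessment would add the secondary factors discussed above, such as latency sensitivity, cost, and team readiness, which this sketch deliberately leaves out.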

Summary:

- Three primary scenarios address different sovereignty and operational priorities

- All‑in ESC maximises sovereignty but limits service availability

- Dual‑partition enables targeted sovereignty with operational complexity trade‑offs

- Decision framework should weigh compliance needs against service and operational requirements

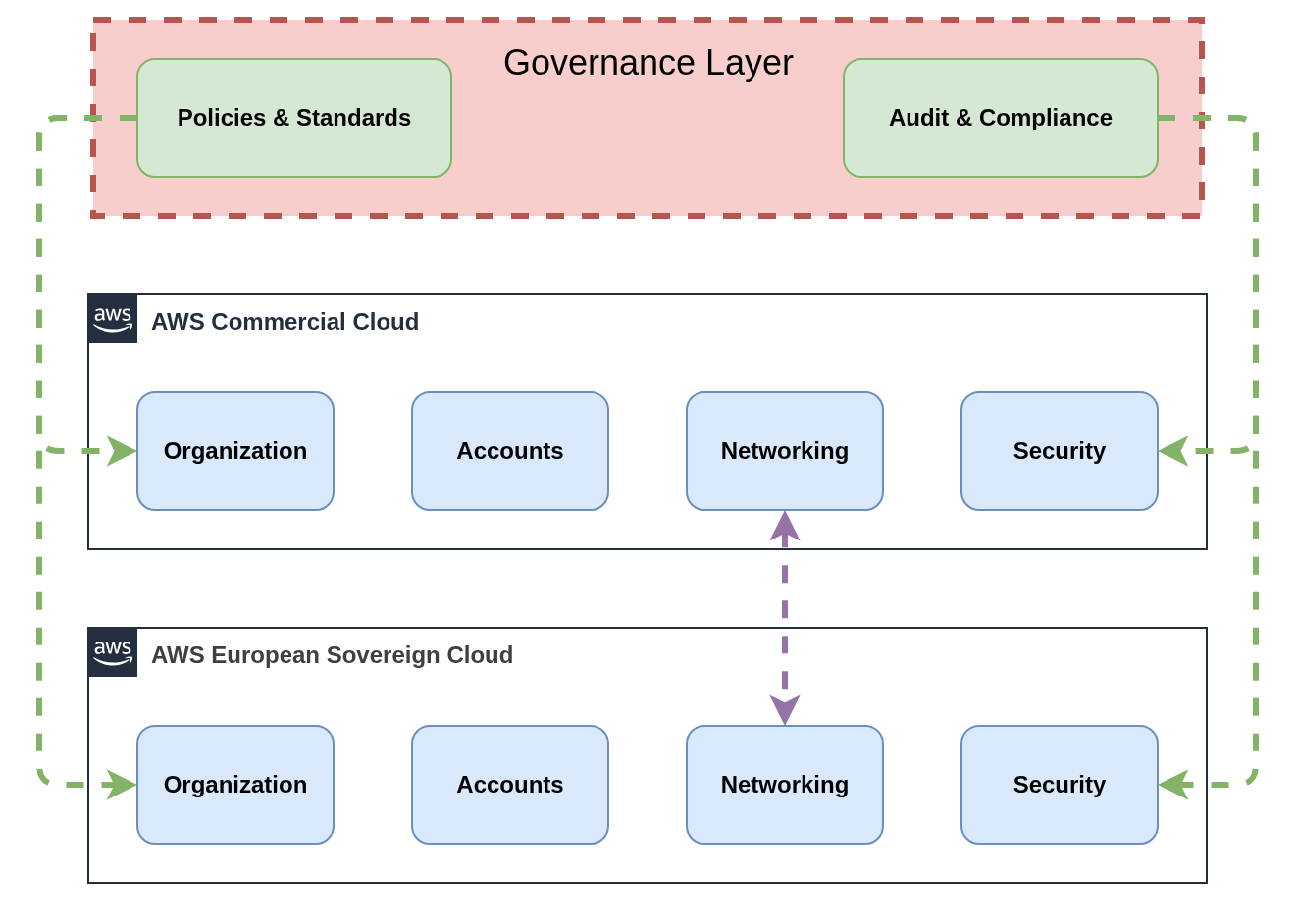

6. Operating Across Multiple AWS Partitions

Operating across both commercial AWS and ESC (or any other partition) requires deliberate organisational design, clear ownership models, and partition‑aware operational procedures. The dual‑partition approach shares many characteristics with multi‑cloud strategies, introducing similar complexity patterns whilst offering unique value propositions that make the operational overhead worthwhile for organizations with sovereignty requirements.

The Multi‑Cloud Parallel: Like multi‑cloud environments, dual‑partition operations present a "best of both worlds" opportunity alongside significant complexity challenges. Organizations gain access to innovation velocity from commercial AWS, with its rapid service development, extensive marketplace, and mature ecosystem, whilst meeting strict compliance requirements through ESC's EU‑sovereign operations. However, this approach introduces the familiar multi‑cloud challenges of disparate service portfolios, configuration drift risks, operational complexity, and the need for unified visibility across heterogeneous environments.

Benefits: Innovation Meets Compliance: The primary value proposition lies in targeted sovereignty deployment. Regulated workloads benefit from ESC's complete EU residency and governance whilst non‑regulated workloads leverage commercial AWS's full service breadth and global reach. This enables organizations to adopt emerging AI/ML services, leverage extensive marketplace offerings, and access bleeding‑edge capabilities for development and innovation workloads, whilst ensuring that customer data processing and regulated operations meet the strictest sovereignty requirements.

Complexity: The Multi‑Environment Tax: The operational overhead mirrors multi‑cloud challenges but with partition‑specific constraints. Service availability differences require constant gap analysis and alternative implementations. Configuration drift becomes a persistent risk as changes in one partition may not replicate to the other, demanding robust automation and governance processes. Consolidated visibility requires external tooling and aggregation platforms, as native AWS observability services cannot span partition boundaries. Organizations must maintain duplicate foundational infrastructure, manage separate billing relationships, and develop partition‑aware operational procedures.

The operating model establishes ownership boundaries and responsibilities across partitions. Platform teams typically own the foundational infrastructure in both partitions, including account vending, networking, and security tooling. Application teams focus on workload deployment within appropriate partitions based on data classification and regulatory requirements. Security and compliance teams develop partition‑specific policies whilst maintaining consistent control objectives.

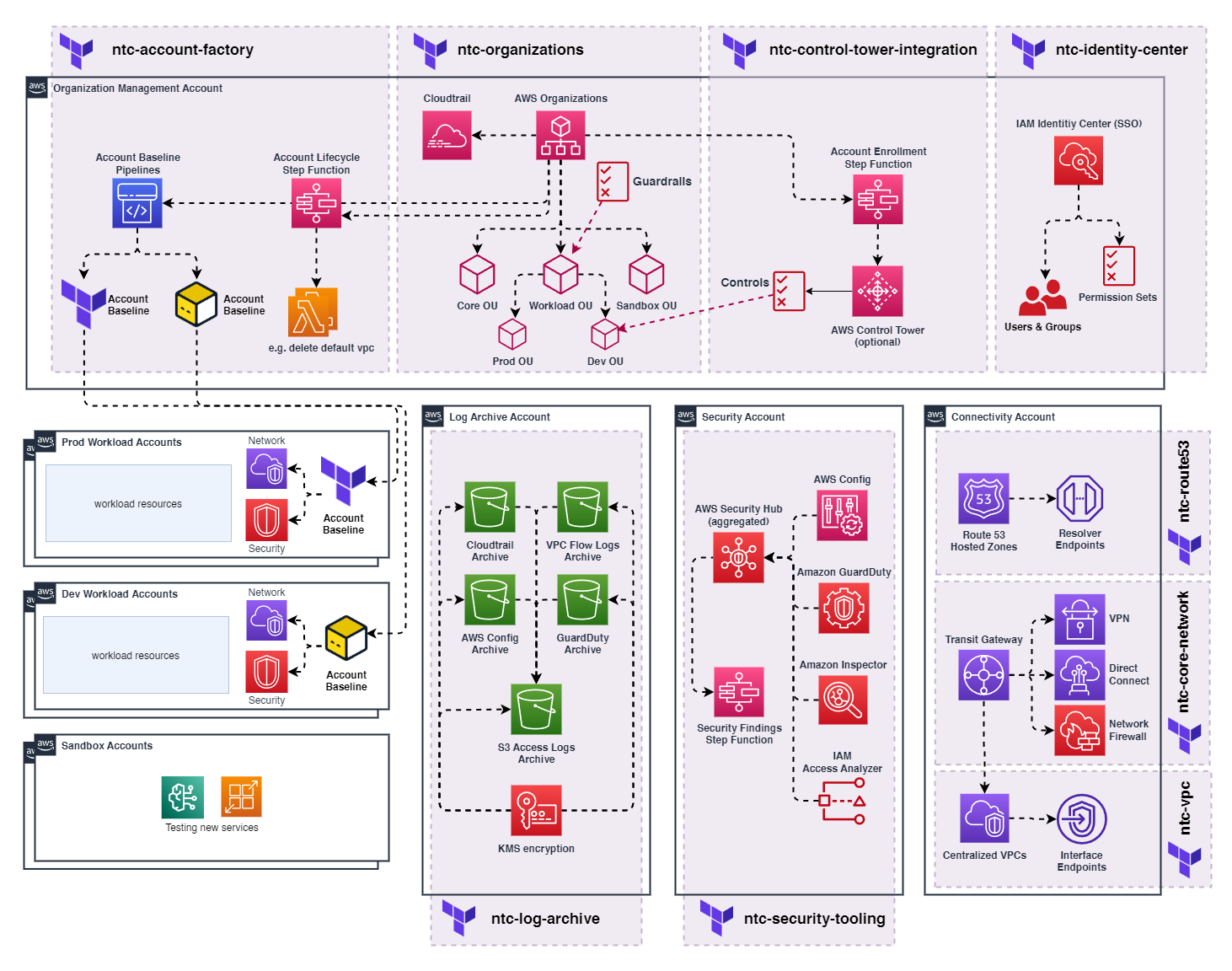

Figure 4: Reference operating model for two partitions


6.1 Risks and Anti‑Patterns

Multi‑partition operations introduce specific risks that require active monitoring and mitigation. Understanding these risks and avoiding common anti‑patterns enables successful long‑term operations across partition boundaries.

Primary Risk Categories: Top risks include tooling fragmentation leading to operational blind spots and increased complexity, hidden cross‑partition data flows that violate sovereignty boundaries or compliance requirements, and configuration drift between partitions creating security vulnerabilities or operational inconsistencies. Service parity evolution risks include dependency on services that may not migrate to ESC or features that differ between partitions.

Cost and Security Escalation: Cost escalation risks emerge from duplicate infrastructure, increased licensing, and operational overhead. Security risks include credential sharing across partitions, misconfigured cross‑partition networking, and inconsistent security policies. Operational risks encompass incident response complexity, knowledge fragmentation across teams, and reduced operational efficiency due to partition constraints.

Critical Anti‑Patterns to Avoid:

- Sharing IAM credentials or access keys across partitions violates security boundaries and complicates audit trails

- Unmanaged public endpoints without proper authentication create security vulnerabilities and potential data exfiltration risks

- Copy‑paste Infrastructure as Code without partition‑aware configuration leads to deployment failures and maintenance overhead

- Ignoring service parity differences leads to deployment failures when services or features are unavailable

- Bypassing approved connectivity patterns creates security risks and operational complications

Mitigation Strategies: Mitigation strategies include automated policy enforcement through Service Control Policies and guardrails, regular configuration audits to detect drift and non‑compliance, partition‑aware monitoring and alerting to maintain operational visibility, and comprehensive documentation of approved patterns and procedures.

| Risk | Likelihood | Impact | Indicator | Mitigation |
|---|---|---|---|---|
| Tooling Fragmentation | High | Medium | Multiple tool instances | Unified dashboards, automation |
| Cross‑Partition Data Flows | Medium | High | Unexpected network traffic | Network monitoring, Data Loss Prevention |
| Configuration Drift | High | Medium | Policy violations | Automated compliance scanning |
| Service Parity Issues | Medium | High | Deployment failures | Service matrix tracking |
| Cost Escalation | Medium | Medium | Budget variance | Unified cost reporting |

Summary:

- Dual‑partition operations require clear ownership models and governance boundaries

- Organisational structures can mirror across partitions or maintain separate hierarchies

- AWS operational constraints in ESC affect customer incident response and change management

- Multi‑partition operations introduce systematic risks requiring active management

- Anti‑patterns like credential sharing and unmanaged endpoints create significant security risks

- Mitigation requires automated enforcement, regular auditing, and comprehensive monitoring

- Success depends on deliberate risk management and adherence to approved patterns

7. Lessons Learned from Multi‑Region Compliance Strategies

Many organizations have implemented multi‑region compliance strategies to address data residency and regulatory requirements. These experiences provide valuable insights for multi‑partition architectures, though partition boundaries introduce harder constraints than regional separation.

Regulated industries often run sensitive workloads in specific regions (such as Zurich for Swiss government or financial requirements) whilst operating innovation workloads in broader regions like Frankfurt. This pattern establishes data classification frameworks, workload placement policies, and operational boundaries that translate to partition environments.

Key patterns that transfer to multi‑partition environments include workload classification based on data sensitivity and regulatory requirements, network segmentation ensuring traffic isolation between compliance domains, telemetry handling with appropriate data residency controls, and audit evidence collection tailored to regulatory frameworks.

Service Availability Challenges Exist in Both Models: Multi‑region compliance strategies already deal with service availability drift, particularly when using opt‑in regions. Zurich (eu‑central‑2) exemplifies this challenge. As an opt‑in region designed for Swiss data residency requirements, it offers a significantly reduced service portfolio compared to Frankfurt (eu‑central‑1). Organizations operating across Zurich and Frankfurt must architect workloads that accommodate service gaps, implement alternative solutions for missing capabilities, and manage the complexity of multi-region deployments. This mirrors the partition challenge but within the same governance domain. However, partitions introduce harder constraints that don't exist in multi‑region deployments.

The lesson for ESC adoption is that existing multi‑region compliance expertise provides a foundation, but partition‑specific patterns require additional consideration for identity, networking, and service parity management.

Summary:

- Multi‑region compliance strategies provide foundation patterns for partition architectures

- Workload classification, network segmentation, and audit evidence patterns transfer effectively

- Partition boundaries introduce harder constraints than regional separation

- Existing compliance expertise accelerates ESC adoption but requires partition‑specific adaptation

8. Core Challenges in Multi‑Partition Engineering

Multi‑partition architectures introduce specific engineering challenges that require deliberate mitigation strategies. These challenges span infrastructure management, tooling integration, service availability, cost optimisation, workload connectivity, and security operations.

Dual Landing Zone Complexity

A Landing Zone provides the foundational infrastructure and governance framework for AWS environments, including account structures, networking components, security baselines, and operational procedures that enable teams to deploy workloads safely and consistently. Operating two Landing Zones creates a tension between duplicated effort and divergent configuration:

- Organizations must maintain foundational infrastructure in both partitions, including account vending, networking components, and security tooling

- Configuration drift becomes a significant risk as changes in one partition may not propagate to the other

- Baseline configurations require partition‑aware templates and mechanisms to prevent divergence
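One way to detect divergence between two Landing Zone baselines is to export each as structured data and compare them, ignoring settings that are expected to differ per partition. The sketch below assumes the baselines are available as nested dictionaries. The keys, the `expected_differences` parameter, and the function names are hypothetical.

```python
MISSING = "<missing>"  # marker for a setting present in only one baseline


def flatten(config, prefix=""):
    """Flatten nested dicts into {'a.b.c': value} for easy comparison."""
    flat = {}
    for key, value in config.items():
        path = f"{prefix}.{key}" if prefix else key
        if isinstance(value, dict):
            flat.update(flatten(value, path))
        else:
            flat[path] = value
    return flat


def detect_drift(commercial, esc, expected_differences=frozenset()):
    """Return {path: (commercial_value, esc_value)} for unexpected differences."""
    left, right = flatten(commercial), flatten(esc)
    drift = {}
    for path in sorted(left.keys() | right.keys()):
        if path in expected_differences:
            continue  # e.g. home region legitimately differs per partition
        a, b = left.get(path, MISSING), right.get(path, MISSING)
        if a != b:
            drift[path] = (a, b)
    return drift


if __name__ == "__main__":
    commercial = {"logging": {"retention_days": 365}, "home_region": "eu-central-1"}
    esc = {"logging": {"retention_days": 90}, "home_region": "eusc-de-east-1"}
    print(detect_drift(commercial, esc, {"home_region"}))
```

Maintaining the list of expected differences explicitly keeps legitimate partition-specific settings visible and reviewable instead of buried in noise.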

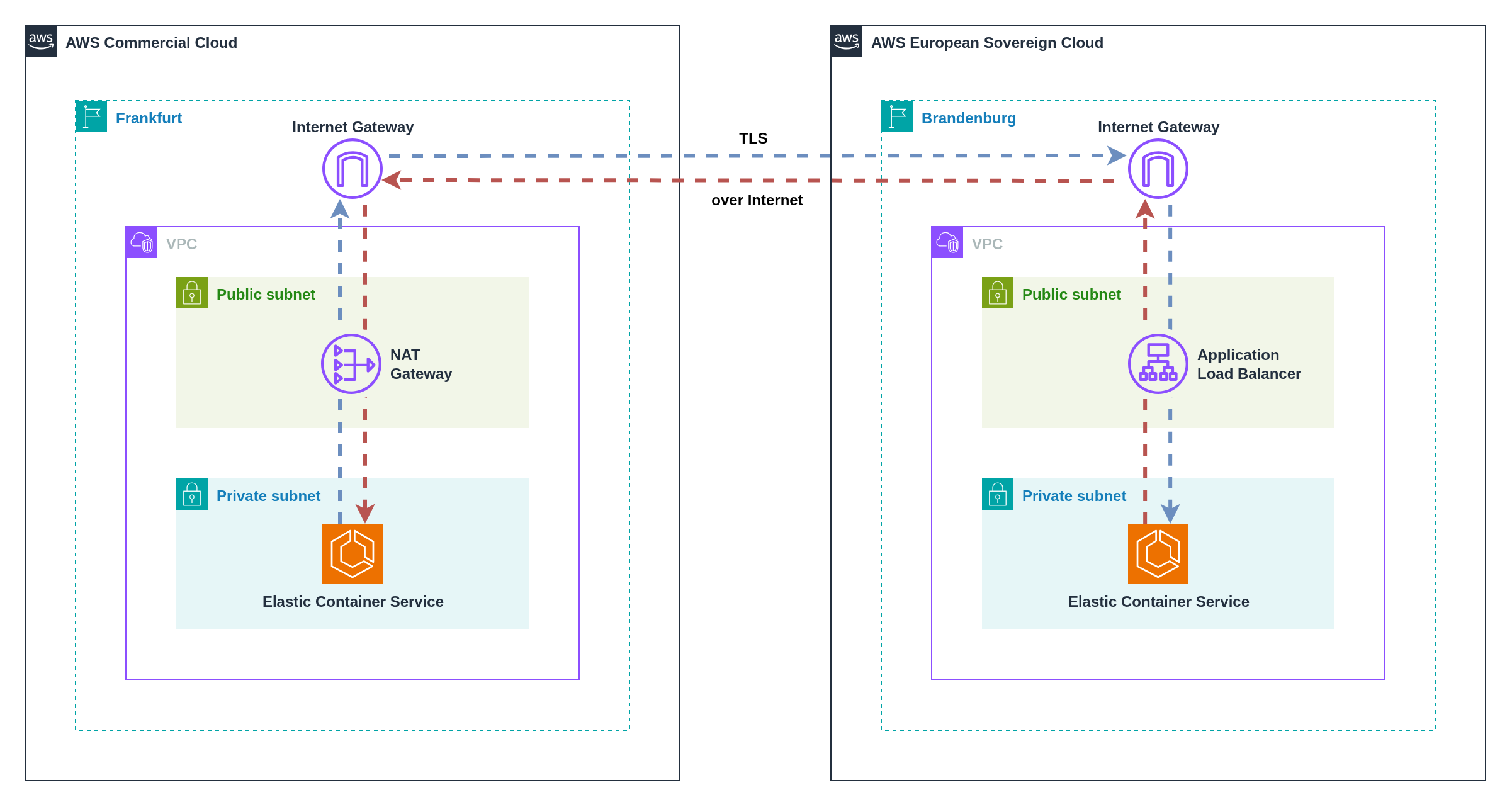

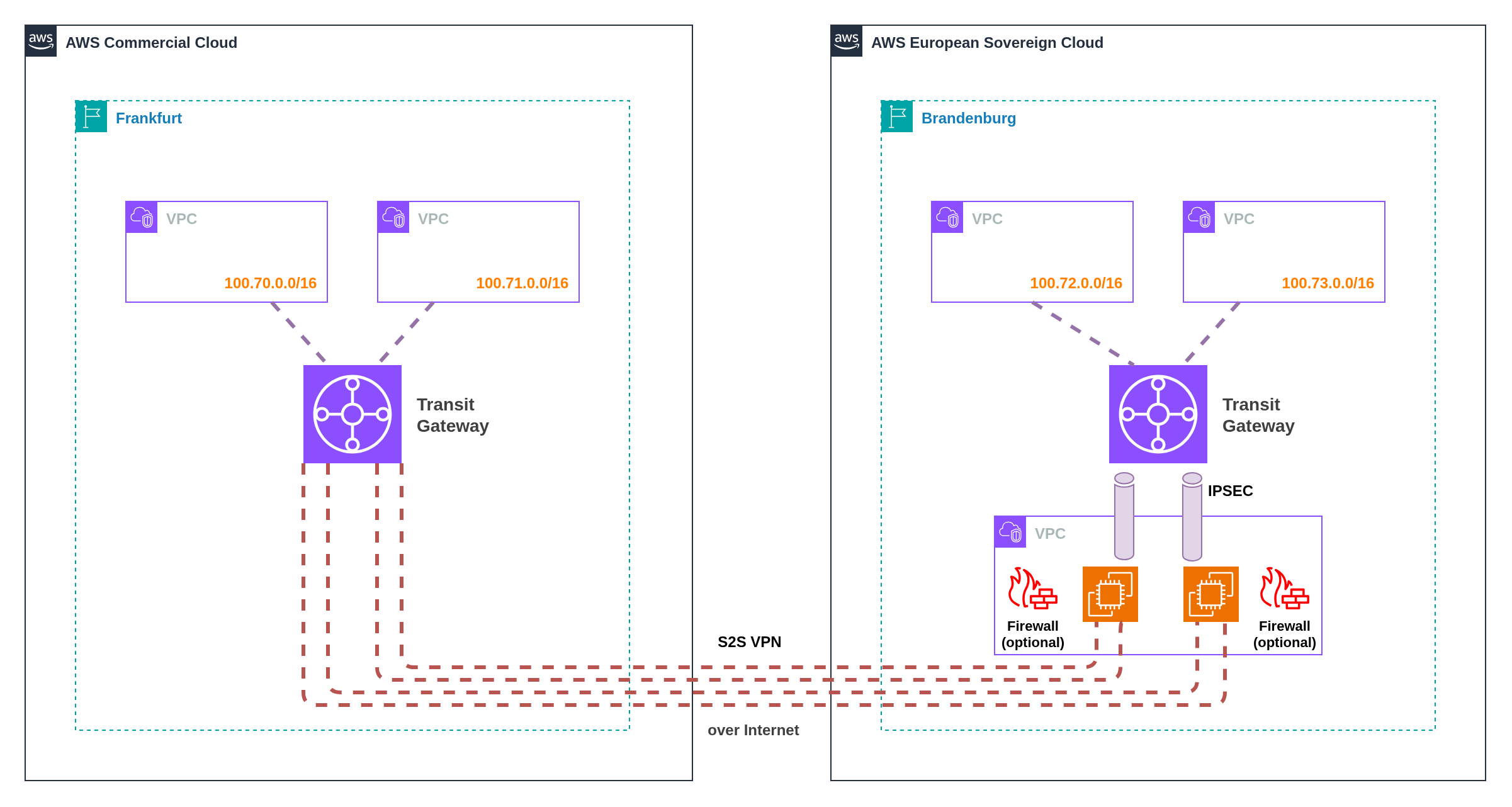

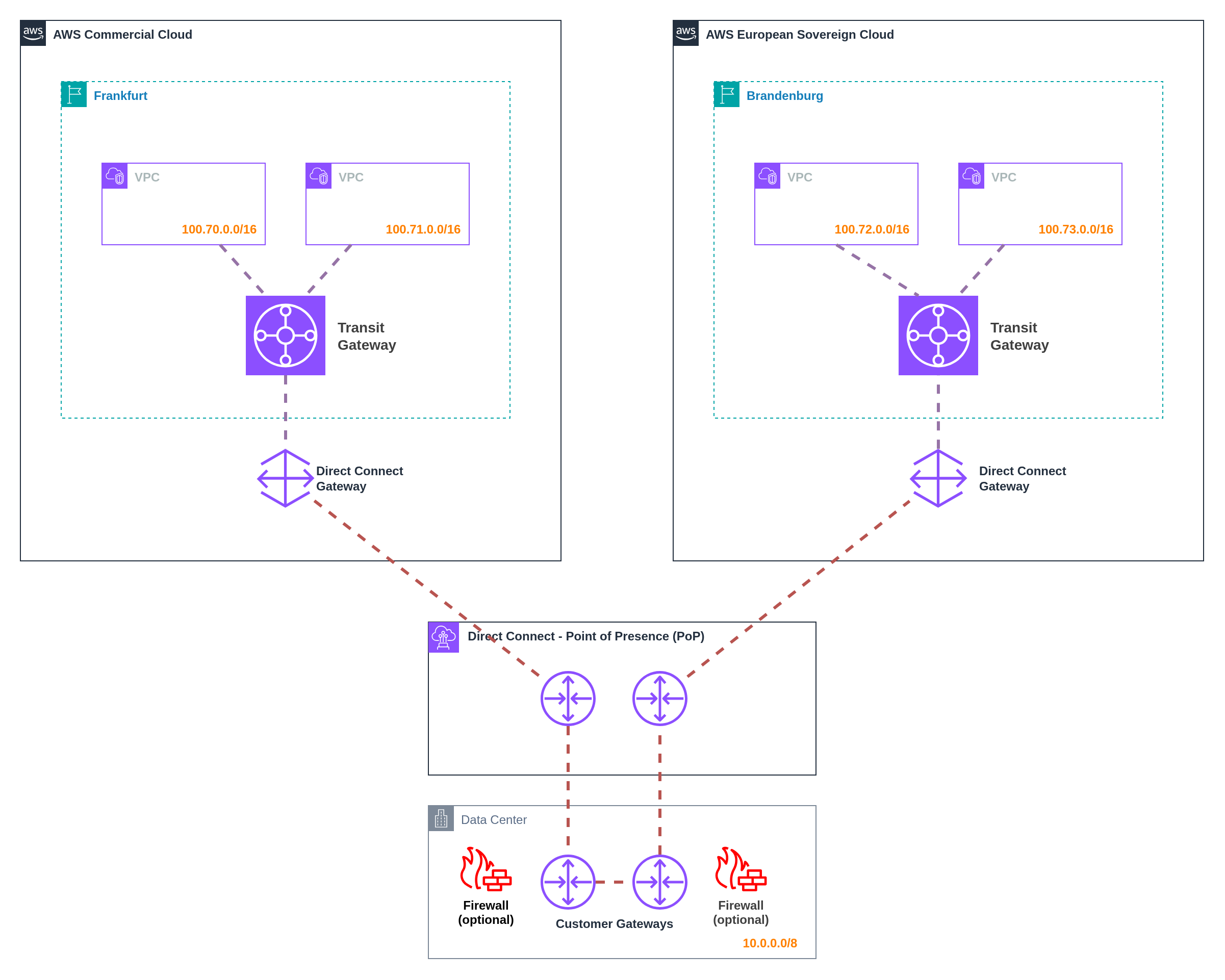

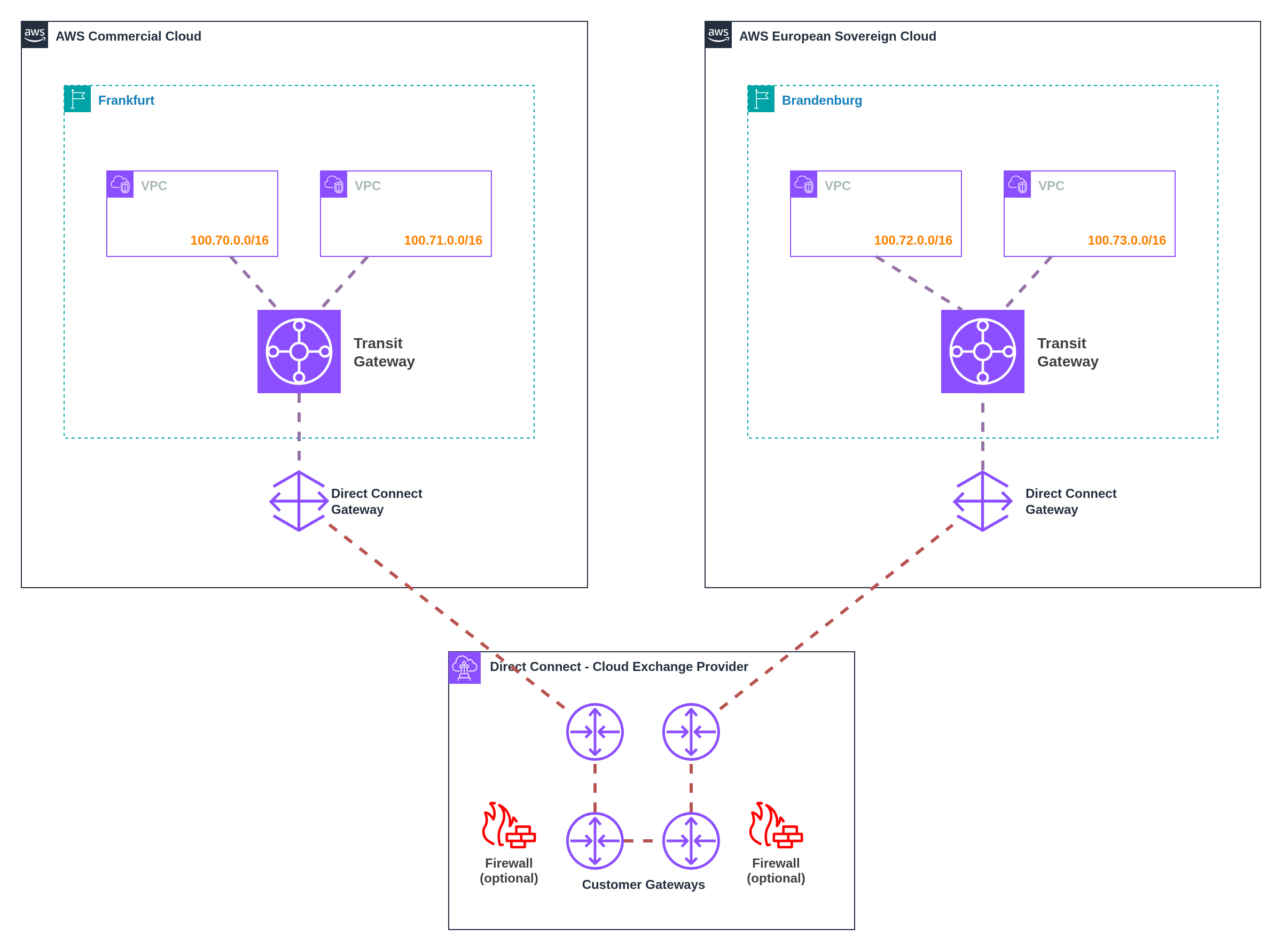

Cross‑Partition Connectivity Constraints

AWS provides no native networking mechanisms between partitions, eliminating traditional connectivity options and forcing alternative architectural patterns:

- VPC peering, Transit Gateway connectivity, and PrivateLink options are unavailable across partition boundaries

- Workload communication requires alternative patterns including application‑level APIs, VPN tunnels over the internet, or routing through on‑premises via Direct Connect

- Each approach introduces latency, complexity, and potential security considerations that must be carefully evaluated
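The constraints above can be captured as a simple policy check that design reviews or pipelines run against proposed data flows. This is a minimal sketch: the pattern identifiers and the `validate_flow` helper are hypothetical names, and the set of approved cross-partition patterns simply mirrors the options listed above.

```python
# Patterns that only work inside a single partition, per the constraints above.
NATIVE_ONLY = {"vpc-peering", "transit-gateway", "privatelink"}

# Cross-partition options named in the text (identifiers are illustrative).
CROSS_PARTITION_APPROVED = {
    "application-api",
    "vpn-over-internet",
    "on-premises-routing",
}


def validate_flow(src_partition, dst_partition, pattern):
    """Return (allowed, reason) for a proposed connectivity pattern."""
    if src_partition == dst_partition:
        allowed = pattern in NATIVE_ONLY | CROSS_PARTITION_APPROVED
        return allowed, "same partition" if allowed else "unknown pattern"
    if pattern in NATIVE_ONLY:
        return False, f"{pattern} cannot cross partition boundaries"
    if pattern in CROSS_PARTITION_APPROVED:
        return True, "approved cross-partition pattern"
    return False, "pattern is not on the approved list"


if __name__ == "__main__":
    print(validate_flow("aws", "aws-eusc", "vpc-peering"))
    print(validate_flow("aws", "aws-eusc", "application-api"))
```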

Security Operations Fragmentation

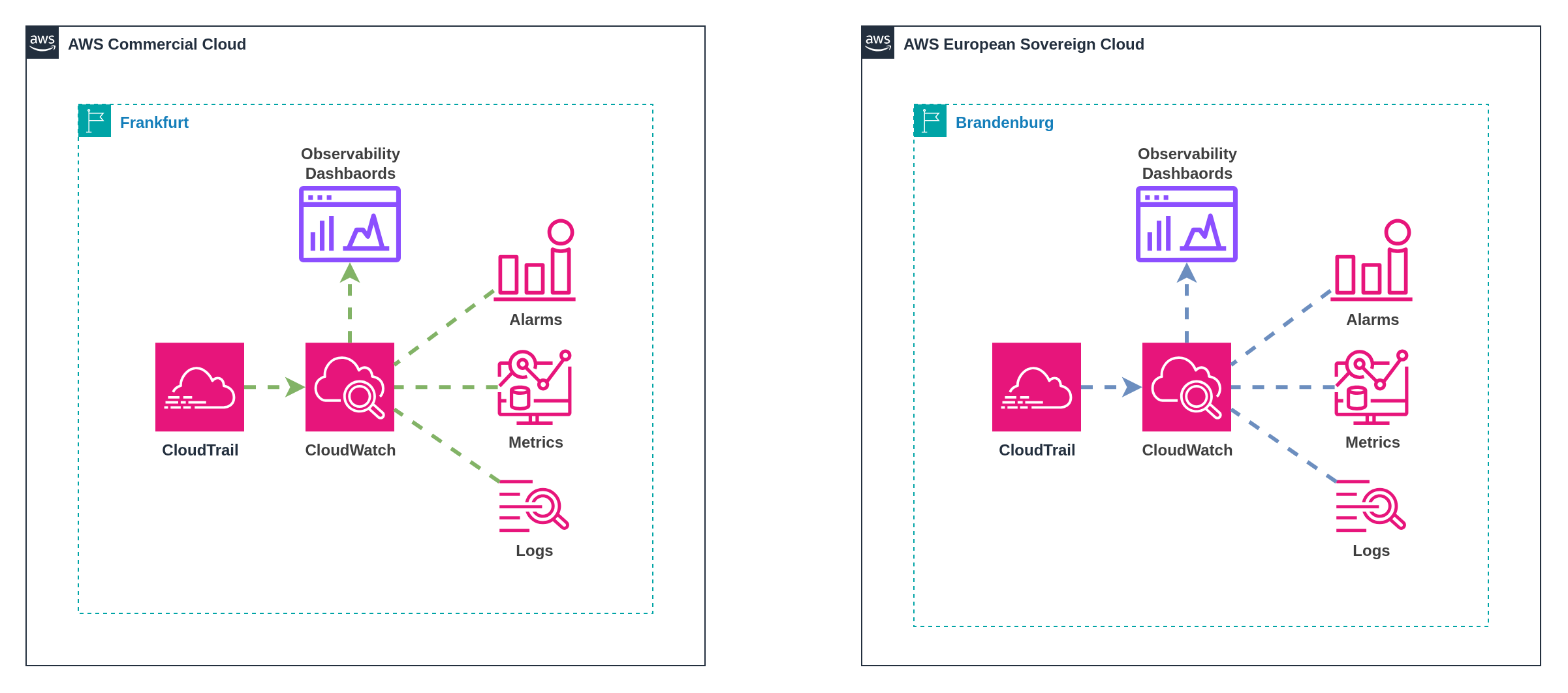

Unified security operations become significantly more complex across partition boundaries, requiring external systems and processes:

- Security services operate independently within each partition, requiring external aggregation for consolidated visibility

- Incident response procedures must accommodate different operational models and partition‑specific constraints

- Cross‑partition security correlation must maintain appropriate data residency controls for telemetry originating from sovereign environments
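One way to reconcile external aggregation with residency controls is to reduce findings from the sovereign partition to an allow-listed set of fields before they leave it. The sketch below illustrates the idea. The field names, the `EXPORTABLE_FIELDS` allow-list, and the `to_exportable` helper are assumptions, and deciding which fields may actually leave ESC is a compliance decision, not a technical one.

```python
# Hypothetical allow-list of fields permitted to leave the sovereign partition.
EXPORTABLE_FIELDS = {"finding_id", "severity", "rule", "timestamp"}

SOVEREIGN_PARTITIONS = {"aws-eusc"}


def to_exportable(finding, source_partition):
    """Return a copy of a finding that is safe to send to the aggregation layer.

    Findings from sovereign partitions are reduced to allow-listed fields;
    findings from other partitions pass through unchanged. Every result is
    tagged with its source partition so correlation remains possible.
    """
    if source_partition in SOVEREIGN_PARTITIONS:
        reduced = {k: v for k, v in finding.items() if k in EXPORTABLE_FIELDS}
    else:
        reduced = dict(finding)
    reduced["partition"] = source_partition
    return reduced


if __name__ == "__main__":
    finding = {
        "finding_id": "f-001",
        "severity": "HIGH",
        "rule": "public-bucket",
        "resource_arn": "arn:aws-eusc:s3:::example-bucket",
    }
    print(to_exportable(finding, "aws-eusc"))
```

Analysts who need the full detail would then pivot into the sovereign partition's own tooling using the retained finding identifier.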

Tooling and Integration Complexity

Tooling fragmentation affects every aspect of operations, multiplying administrative overhead and integration requirements:

- Identity systems require separate configurations for each partition with potential federation challenges

- Billing and cost management span multiple systems, complicating financial reporting and optimization

- Monitoring and SIEM solutions need partition‑specific deployments with aggregation capabilities

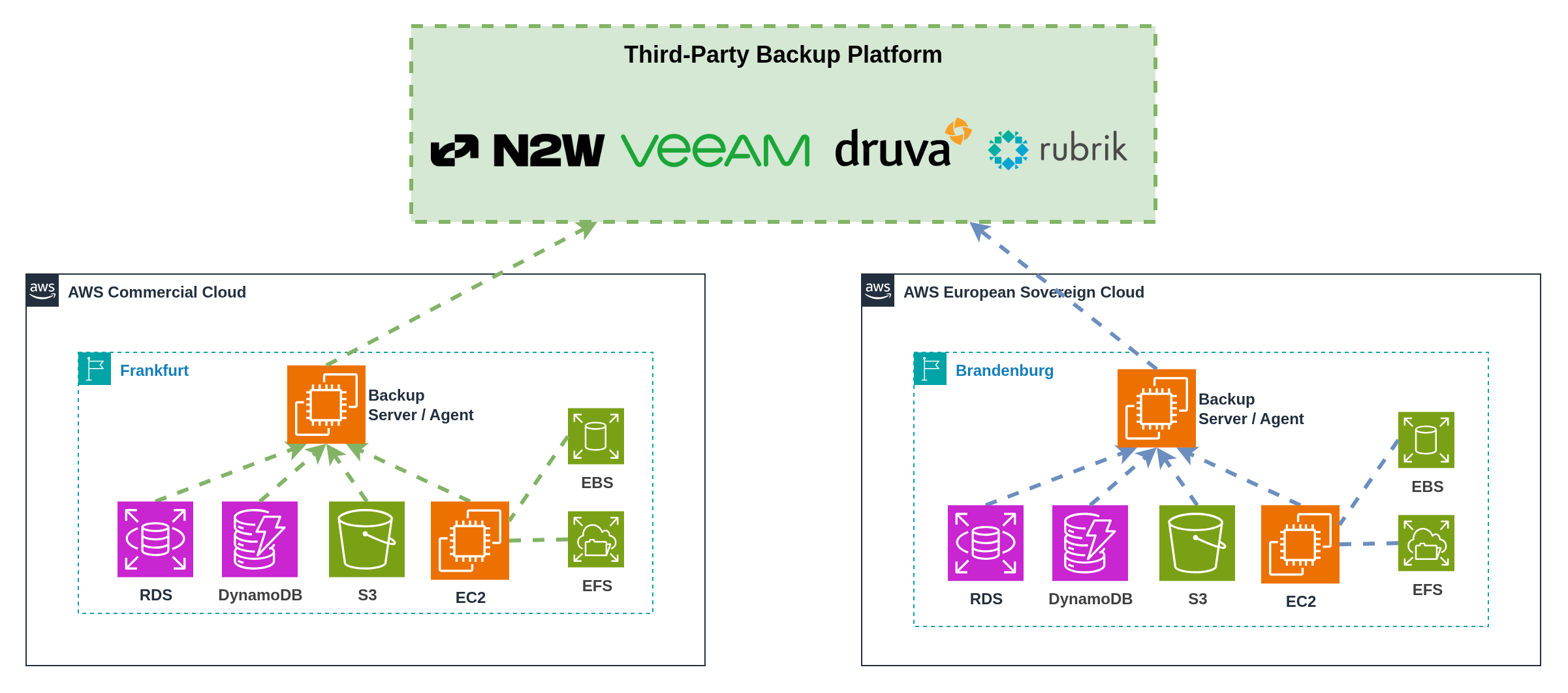

- Backup and disaster recovery solutions must accommodate partition boundaries and data residency requirements

Service Parity Drift

Service availability differences create ongoing architectural and operational challenges as partitions evolve at different rates:

- Commercial AWS introduces new services and features continuously, whilst ESC service availability evolves based on regulatory approval and operational readiness

- Organizations must maintain service parity matrices and track capability gaps between partitions

- Development teams need workarounds for missing capabilities and migration paths as services become available

- Feature inconsistencies can create technical debt and limit architectural flexibility
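A service parity matrix can start as a small data structure checked in alongside the architecture documentation. The sketch below computes, per workload, which required services are missing in a target partition. The service names are placeholders and say nothing about actual ESC availability, which should be taken from the official availability list. The `parity_gaps` helper is hypothetical.

```python
def parity_gaps(available, required, target="aws-eusc"):
    """Return {workload: [missing services]} for the target partition.

    available: {partition: set of service names offered there}
    required:  {workload: set of service names the workload depends on}
    Workloads with no gaps are omitted from the result.
    """
    offered = available[target]
    gaps = {}
    for workload, needs in required.items():
        missing = sorted(needs - offered)
        if missing:
            gaps[workload] = missing
    return gaps


if __name__ == "__main__":
    available = {
        "aws": {"service-a", "service-b", "service-c"},
        "aws-eusc": {"service-a", "service-b"},
    }
    required = {
        "payments": {"service-a", "service-c"},
        "portal": {"service-a"},
    }
    print(parity_gaps(available, required))
```

Re-running the comparison whenever the availability data is refreshed shows which workarounds can be retired as services arrive in the target partition.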

Cost and Procurement Complexity

Multi‑partition operations introduce financial and procurement challenges that extend beyond technical considerations:

- Organizations need to manage separate billing relationships and potentially different pricing models

- Software licence entitlements may not transfer between partitions, requiring duplicate procurement

- Marketplace availability is anticipated to differ, potentially affecting vendor relationships and sourcing strategies

- Cost attribution and optimization become more complex across multiple billing systems and governance domains
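Unified reporting across separate billing systems usually starts by normalising exported cost records into one shape and tagging each with its partition. The sketch below totals costs per cost centre, partition, and currency. The record fields are assumptions about an export format, not an AWS schema, and amounts are kept per currency so that no exchange rate is implied.

```python
from collections import defaultdict
from decimal import Decimal


def consolidate(records):
    """Sum amounts per (cost_center, partition, currency).

    Each record is a dict with the hypothetical keys 'cost_center',
    'partition', 'currency', and 'amount' (a decimal string).
    """
    totals = defaultdict(Decimal)  # Decimal() starts at 0
    for record in records:
        key = (record["cost_center"], record["partition"], record["currency"])
        totals[key] += Decimal(record["amount"])
    return dict(totals)


if __name__ == "__main__":
    records = [
        {"cost_center": "team-a", "partition": "aws", "currency": "USD", "amount": "10.00"},
        {"cost_center": "team-a", "partition": "aws", "currency": "USD", "amount": "5.50"},
        {"cost_center": "team-a", "partition": "aws-eusc", "currency": "EUR", "amount": "7.25"},
    ]
    for key, total in sorted(consolidate(records).items()):
        print(key, total)
```

Using the same cost-centre tag values in both partitions is what makes this kind of roll-up possible, which is why partition-aware tagging conventions are worth agreeing early.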

| Challenge | Impact | Mitigation |
|---|---|---|
| Dual Landing Zones | Configuration drift, duplicate maintenance | Shared configuration, partition‑aware IaC templates |
| Cross‑partition connectivity | Limited networking options, latency, complexity | Application APIs, VPN tunnels, on-premises routing |
| Unified security operations | Fragmented visibility, complex incident response | Cross‑partition aggregation, partition‑aware playbooks |
| Tooling fragmentation | Operational overhead, licence proliferation | Aggregated dashboards, centralised identity |
| Service parity drift | Feature gaps, technical debt | Service matrix tracking, architectural flexibility |
| Cost complexity | Split billing, unclear attribution | Unified reporting, partition‑aware tagging |

Summary:

- Multi‑partition engineering introduces systematic complexity across all operational domains

- Configuration management and tooling fragmentation require architectural solutions

- Service parity drift demands ongoing tracking and flexible architectural patterns

- Cost and procurement complexity needs unified reporting and governance approaches

9. Multi-Partition Integration Patterns (Blueprints)

This chapter presents seven comprehensive pattern areas that address the core integration challenges in multi-partition environments. Each pattern area provides detailed implementation approaches, trade-off analysis, and practical guidance for organizations managing infrastructure across AWS partitions.

Each pattern area includes:

- Multiple implementation options with detailed pros/cons analysis

- Comprehensive comparison matrices for pattern selection

- Practical code examples and configuration guidance

- Real-world considerations including costs, complexity, and operational impact

- ESC-specific constraints and service availability considerations

9.1 Landing Zone and Account Vending

A Landing Zone provides the foundational infrastructure and governance framework for AWS environments, including account structures, networking components, security baselines, and operational procedures that enable teams to deploy workloads safely and consistently. Multiple partitions require multiple Landing Zones, leading to duplication and divergence risks:

- Organizations must maintain foundational infrastructure in both partitions, including account vending, networking components, and security tooling

- Configuration drift becomes a significant risk as changes in one partition may not propagate to the other

- Baseline configurations require partition‑aware templates and mechanisms to prevent divergence

Managing Landing Zones across partitions requires careful consideration of operational models that balance governance consistency with partition isolation. Organizations face two primary approaches based on their Infrastructure as Code maturity and tolerance for operational complexity.

Infrastructure as Code (IaC) is essential for managing multi-partition Landing Zones and account vending reliably. The complexity of maintaining consistent configurations across separate AWS partitions makes manual management unsustainable at scale.

Preventing Configuration Drift: IaC templates ensure identical configurations deploy across partitions, with version control tracking all changes and automated drift detection identifying deviations between partitions.

Compliance Parity Assurance: Template-driven deployments guarantee that compliance controls remain consistent across partitions, enabling auditors to verify control implementation through code review rather than environment-by-environment inspection.

Operational Scalability: Automated account vending and baseline deployment workflows ensure consistent user experiences and security configurations without manual intervention or partition-specific procedures.

Change Management: Centralized IaC repositories with proper branching strategies and approval workflows ensure that changes undergo review before deployment to either partition, reducing the risk of introducing inconsistencies.
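The automated drift detection mentioned above can be approximated by comparing the rendered baseline of each partition. This is a minimal sketch, assuming hypothetical baseline dictionaries and an explicit allow-list of settings that are expected to differ (such as the region):

```python
def flatten(config, prefix=""):
    """Flatten nested dictionaries into dotted keys so values can be compared."""
    flat = {}
    for key, value in config.items():
        path = f"{prefix}.{key}" if prefix else key
        if isinstance(value, dict):
            flat.update(flatten(value, path))
        else:
            flat[path] = value
    return flat


def detect_drift(commercial, esc, allowed_differences=()):
    """Return {setting: (commercial_value, esc_value)} for unexpected differences."""
    left, right = flatten(commercial), flatten(esc)
    drift = {}
    for key in sorted(set(left) | set(right)):
        if key in allowed_differences:
            continue
        if left.get(key) != right.get(key):
            drift[key] = (left.get(key), right.get(key))
    return drift


# Hypothetical baselines; real values would come from IaC state or plan output.
commercial_baseline = {
    "region": "eu-central-1",
    "password_policy": {"min_length": 14, "require_mfa": True},
}
esc_baseline = {
    "region": "eusc-de-east-1",
    "password_policy": {"min_length": 12, "require_mfa": True},
}

drift = detect_drift(commercial_baseline, esc_baseline, allowed_differences={"region"})
print(drift)
```

Keeping the allow-list explicit makes intentional partition differences reviewable, whilst anything else surfaces as drift.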

AWS Control Tower requires separate deployment in each partition, as it operates within the scope of a single AWS Organization and cannot manage resources across partition boundaries.

Landing Zone Accelerator on AWS (LZA) has the same limitation - it maps to a single AWS Organization and requires duplication of configurations for multi-partition deployments. Organizations using LZA must maintain separate configuration files and operational procedures for each partition.

Multi-Partition Implications:

- Each partition requires its own Control Tower setup with separate organizational unit structures, guardrails, and account baselines

- Account vending becomes a separate process for each partition

- Configuration synchronization between partitions requires manual coordination or custom automation

- Operational overhead increases significantly compared to single-partition deployments

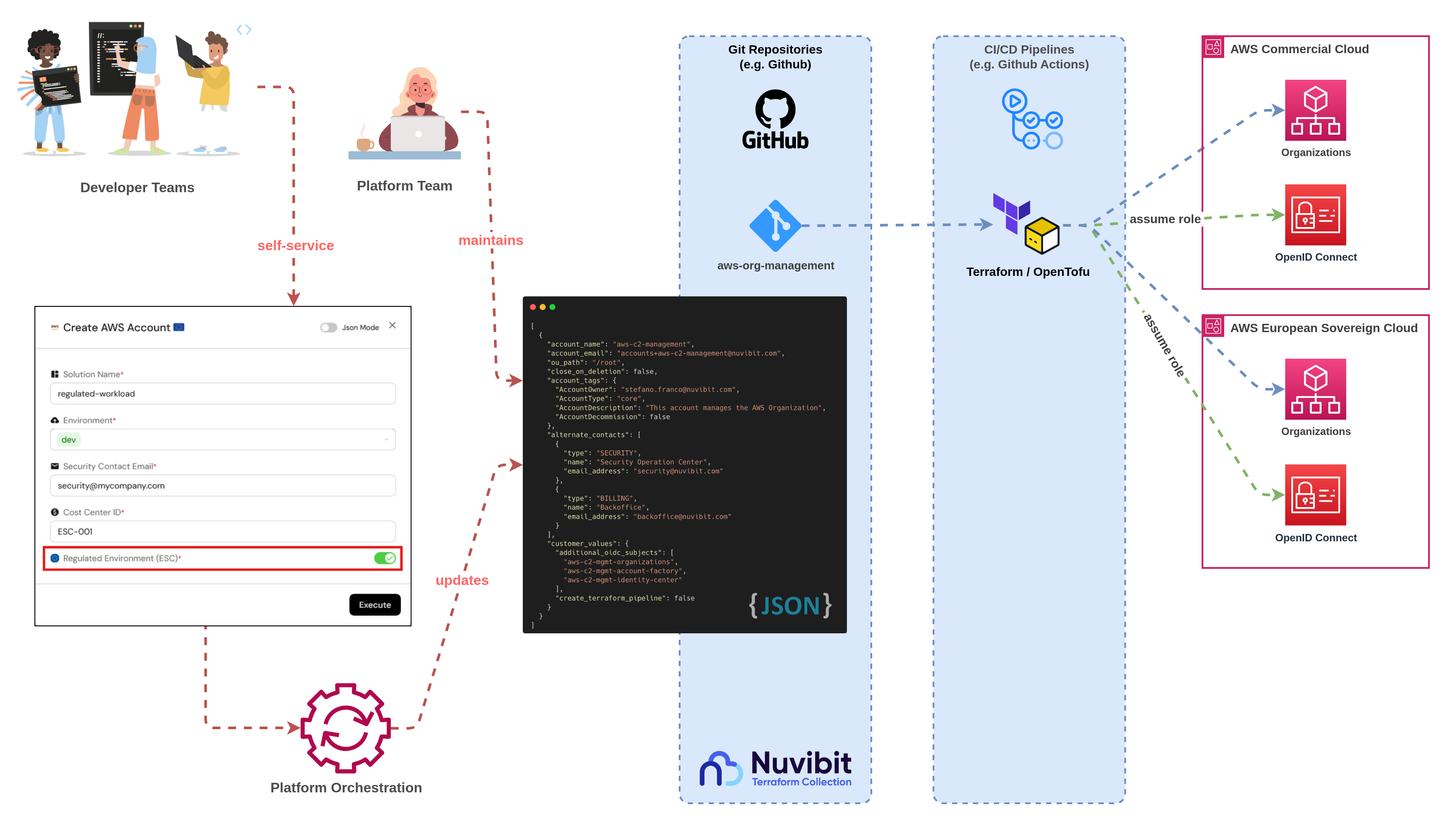

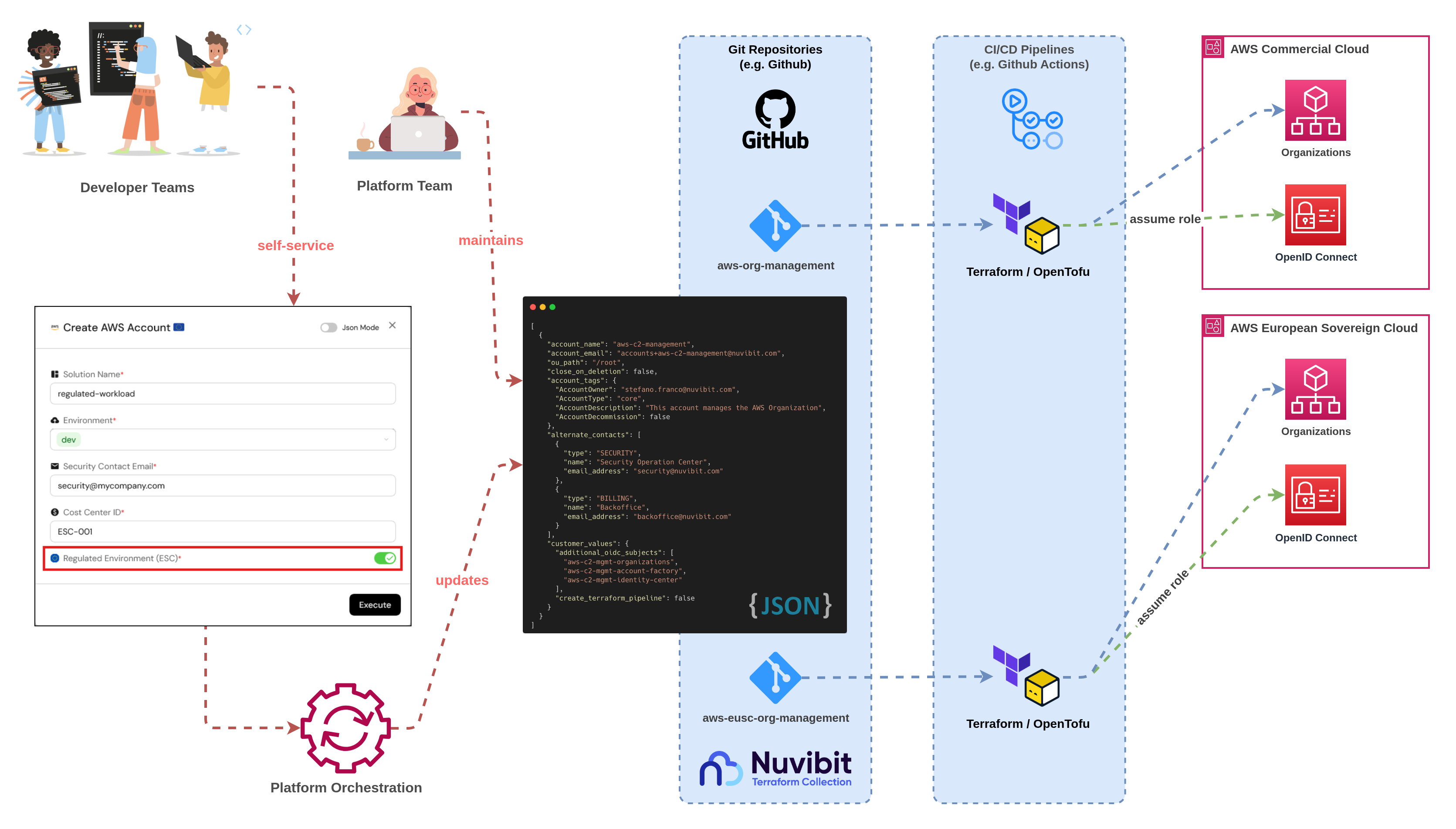

Mitigation Strategy: Organizations should evaluate Terraform/OpenTofu-based Landing Zone solutions like the Nuvibit Terraform Collection (NTC) that natively support multi-partition deployment through provider aliasing and shared configuration templates.

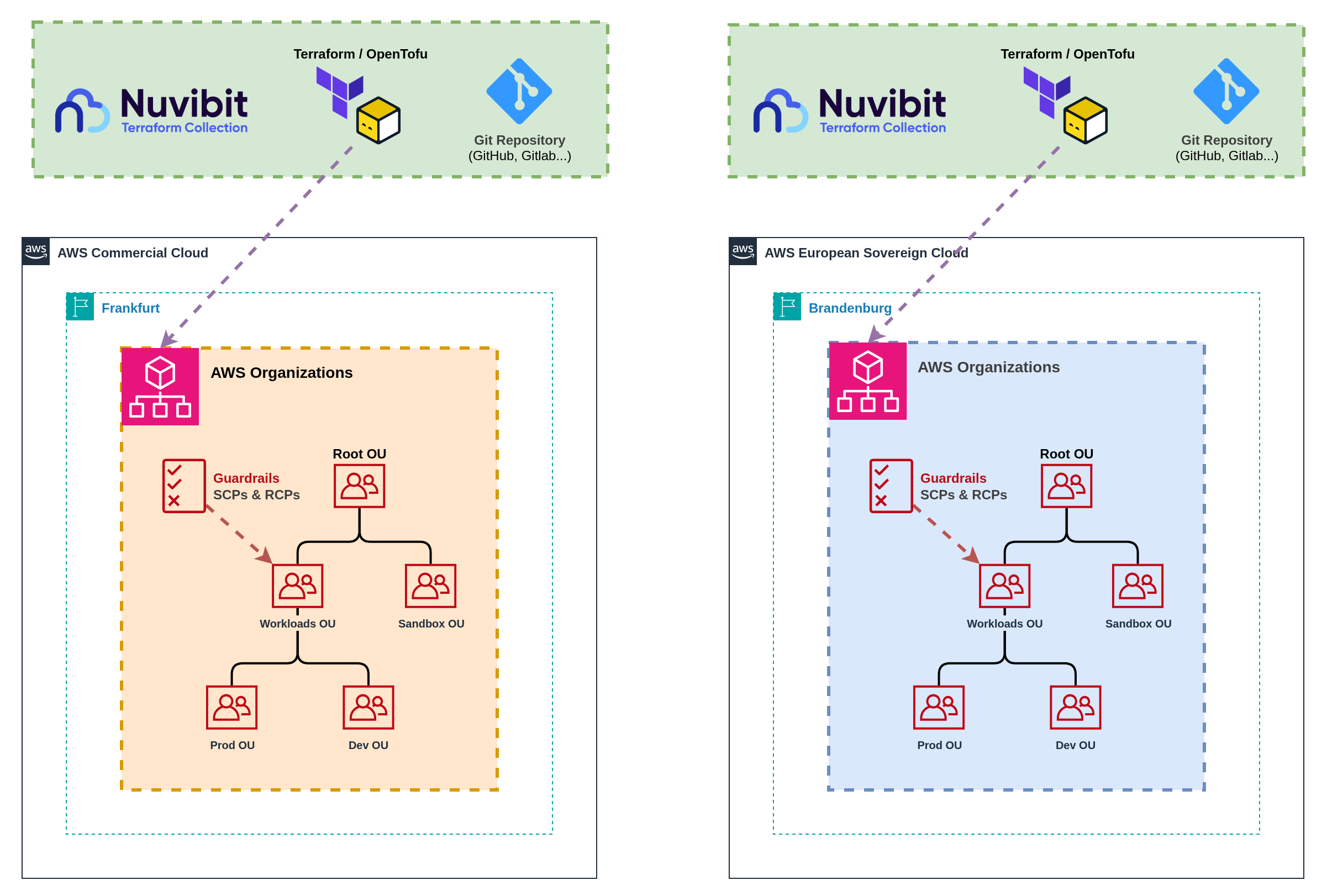

Pattern 1 Independent Landing Zone Operations

- Nuvibit Terraform Collection (NTC)

- Landing Zone Accelerator on AWS (LZA)

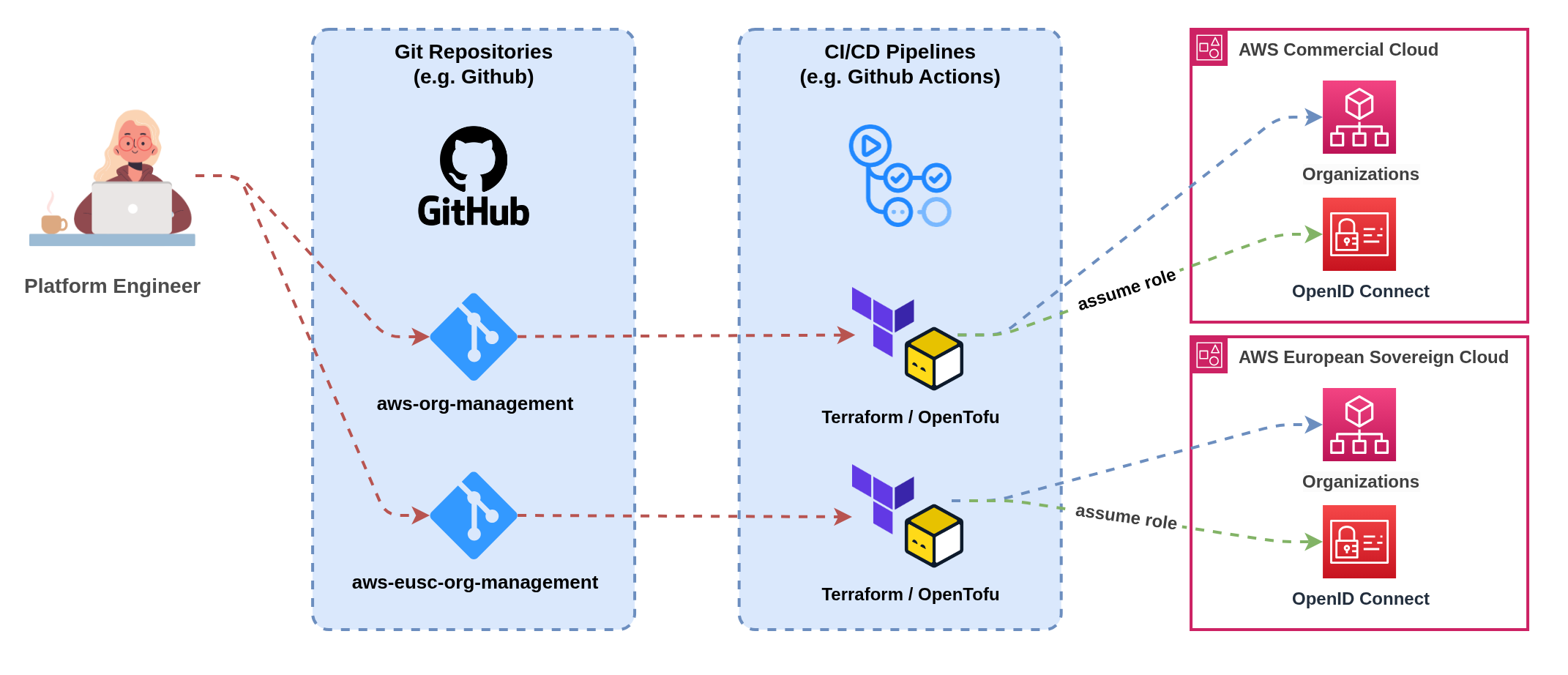

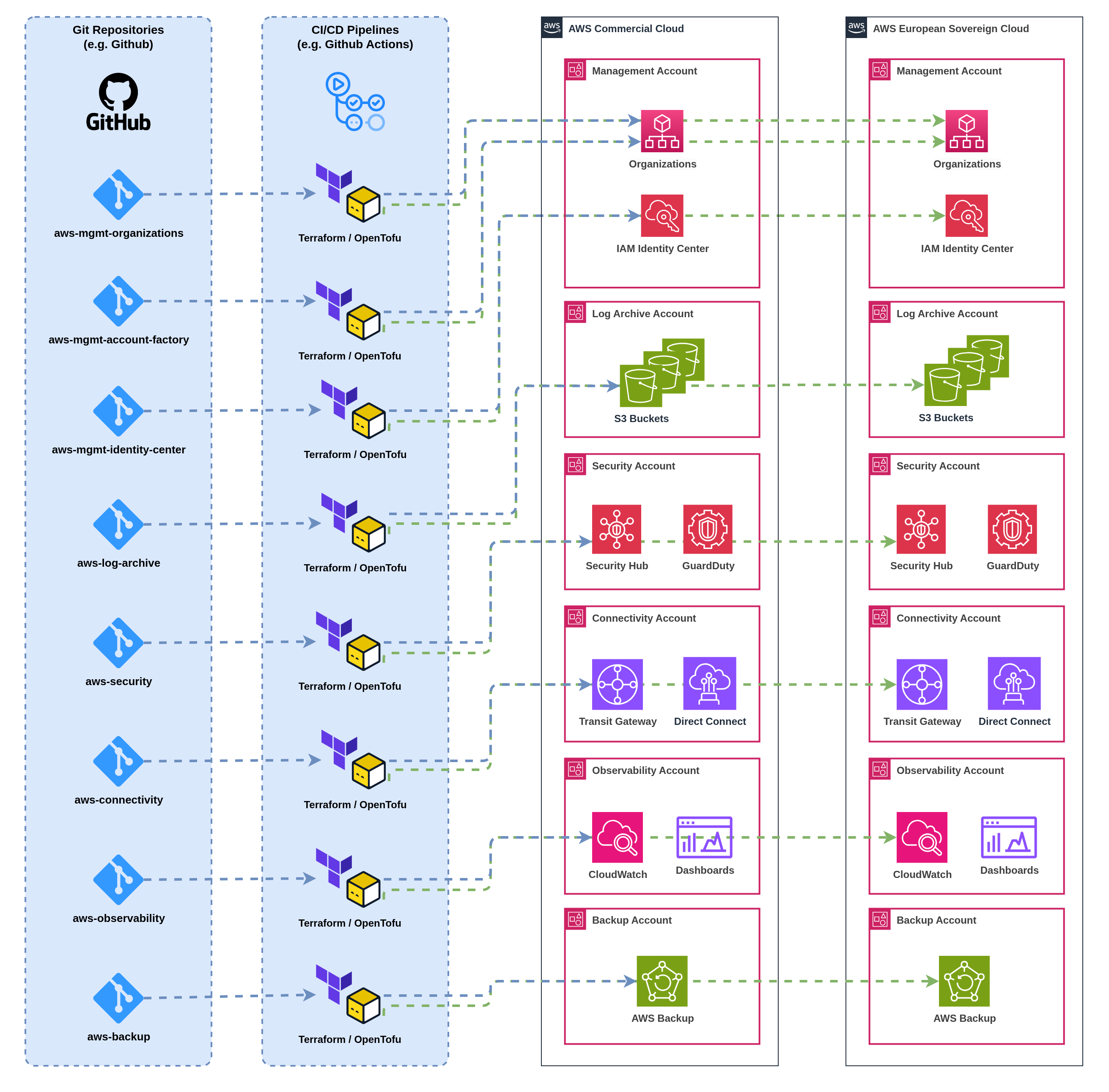

Figure 5a: Completely independent Landing Zone management

Figure 5b: Completely independent Landing Zone management

This pattern maintains completely independent Landing Zone deployments with separate Infrastructure as Code pipelines, governance processes, and operational procedures for each partition. Each partition operates its own Landing Zone with isolated account vending, baseline configurations, and organizational structures.

Implementation: Deploy separate Landing Zone solutions (Landing Zone Accelerator on AWS, Nuvibit Terraform Collection) in each partition with independent configuration management. Each partition maintains its own organizational unit structures, Service Control Policies, and account baselines without shared components or cross-partition coordination.

Account Vending: Separate account vending processes operate independently in each partition, requiring users to request accounts through partition-specific interfaces. Account baseline configurations may differ between partitions due to service availability differences and independent configuration management.

Infrastructure as Code Approaches:

- Nuvibit Terraform Collection (Terraform/OpenTofu): Separate Terraform configurations with independent state management and deployment pipelines for each partition. Requires careful version management and testing across partitions.

- Landing Zone Accelerator on AWS (CloudFormation): Requires complete duplication of configuration files for each partition. Each partition's CloudFormation stacks operate independently with separate parameter files and organizational mappings.

Benefits: Maximum partition isolation with no cross-partition dependencies, simplified compliance boundaries with clear separation of concerns, independent operational procedures that can accommodate partition-specific constraints, and reduced complexity in governance structures.

Challenges: Code duplication across partition configurations increases maintenance overhead, configuration drift risk without automated synchronization, separate account vending processes create user experience friction, and increased operational overhead for platform teams managing dual environments.

Operational Considerations: Platform teams require expertise in partition-specific operational constraints. Change management processes must coordinate across separate environments to maintain consistency whilst respecting partition boundaries.

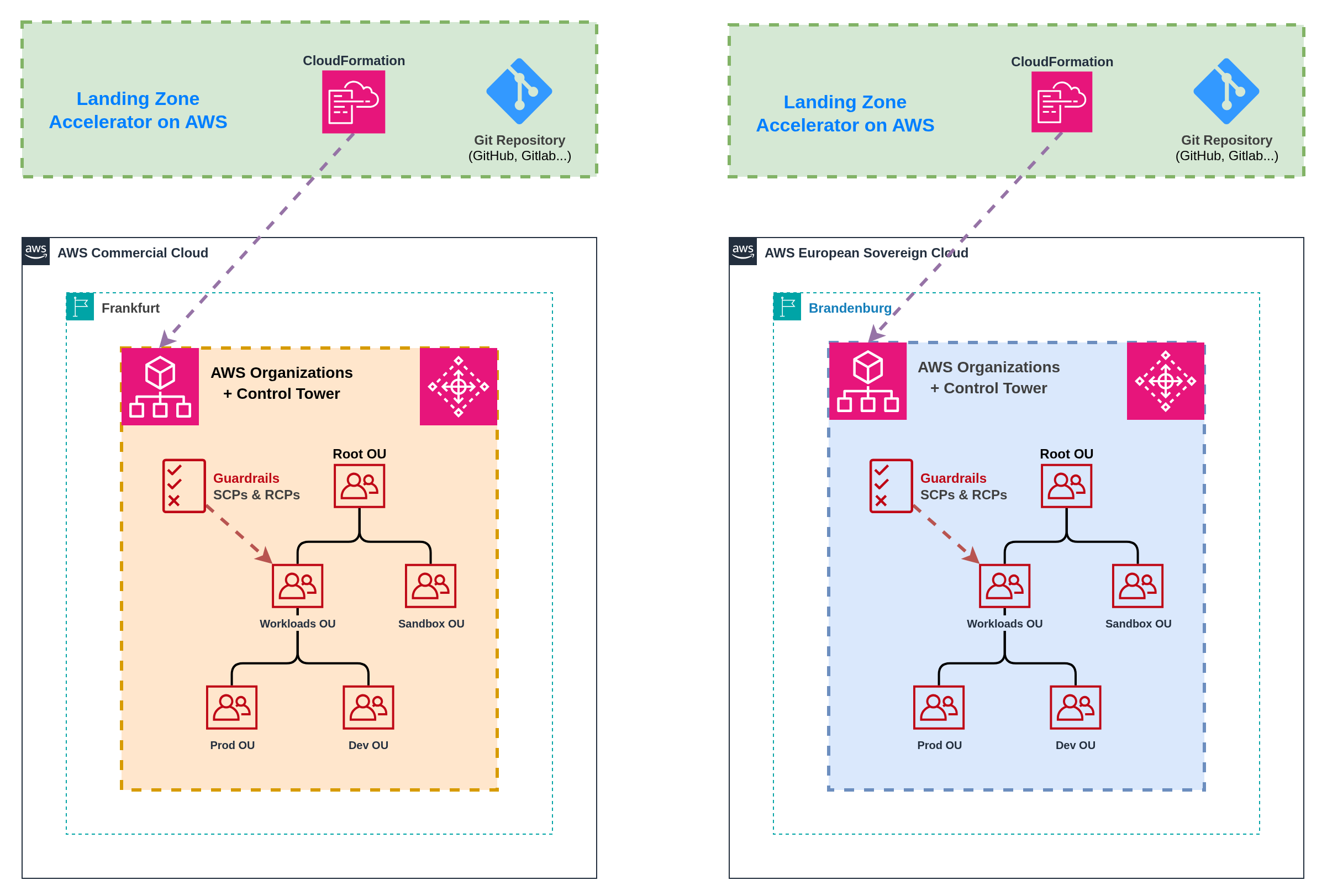

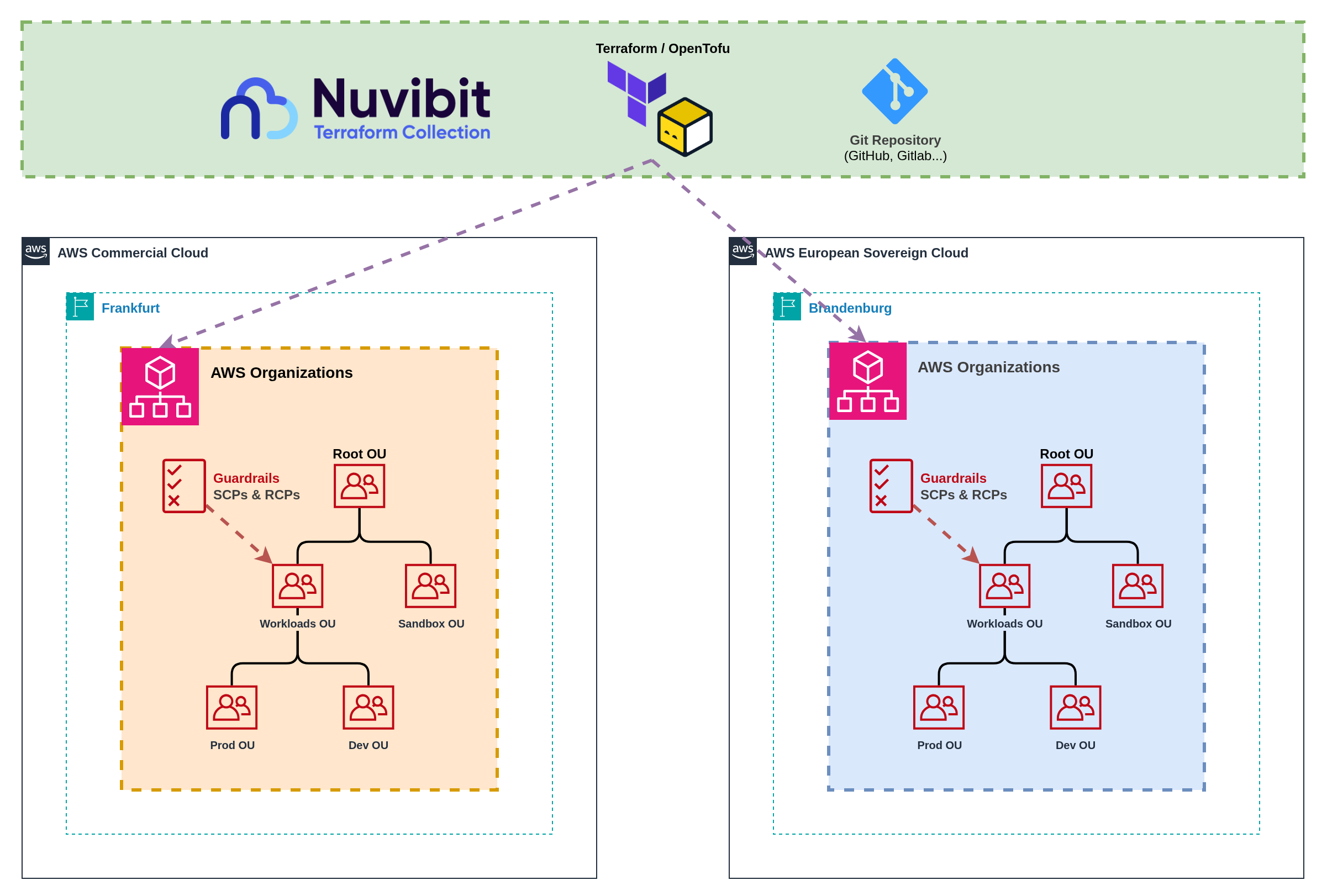

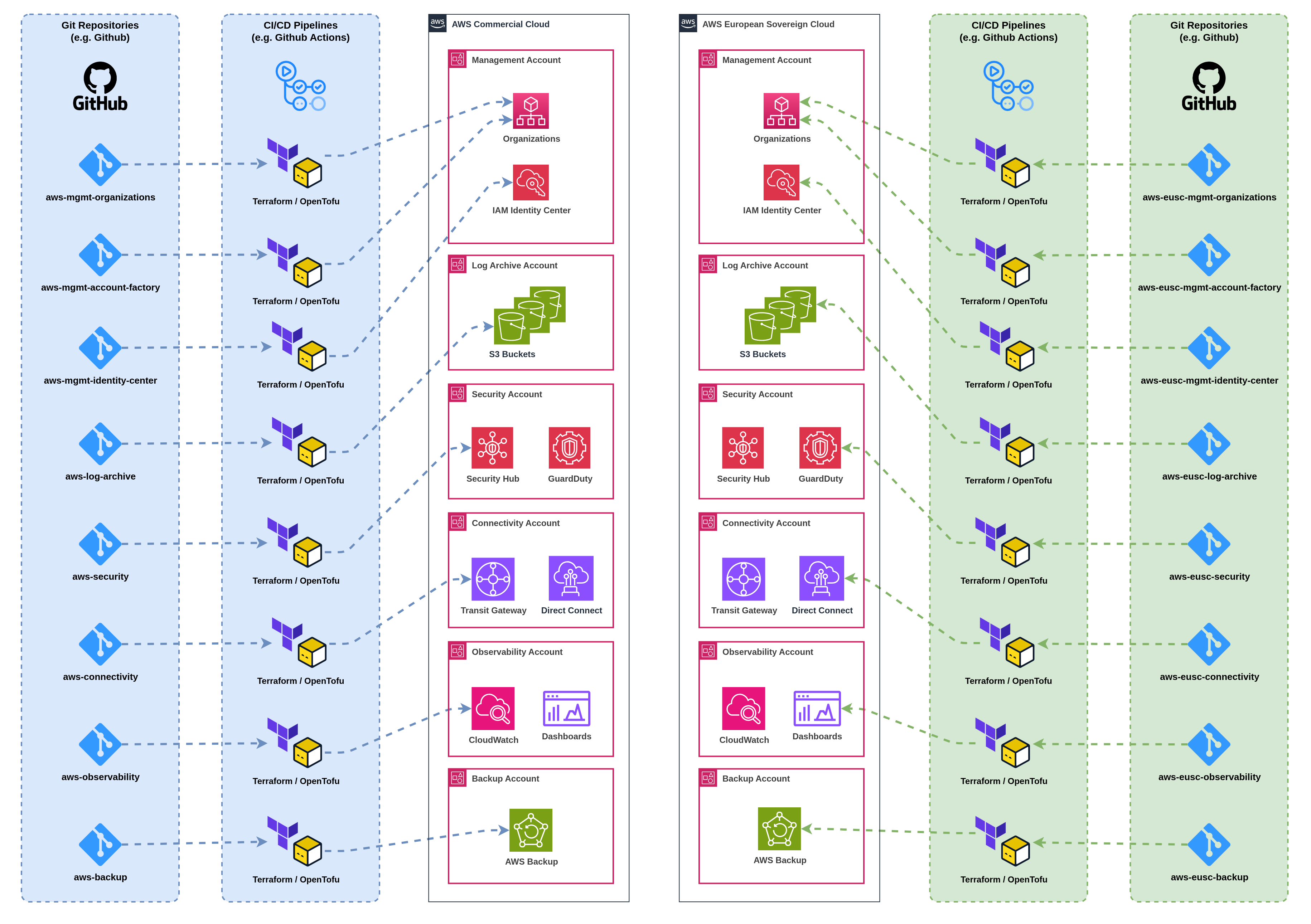

Pattern 2 Unified Landing Zone Operations

- Nuvibit Terraform Collection (NTC)

- Landing Zone Accelerator on AWS (LZA)

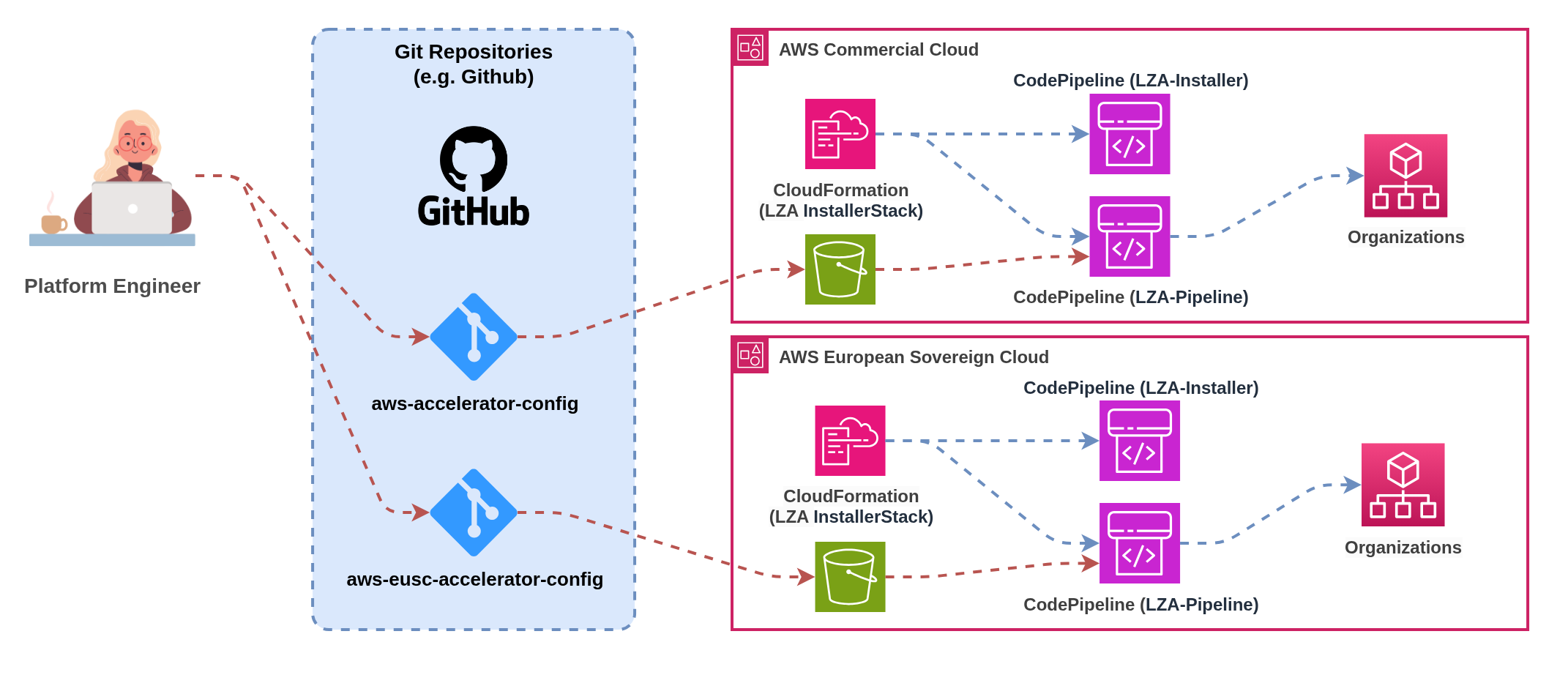

Figure 6a: Shared configuration with unified deployment pipelines

Landing Zone Accelerator on AWS cannot be deployed in a unified pattern.

LZA maps to a single AWS Organization and requires complete duplication of configurations for multi-partition deployments. Each partition needs its own LZA setup with separate configuration files, organizational structures, and operational procedures.

For unified multi-partition operations, consider Terraform/OpenTofu-based solutions like the Nuvibit Terraform Collection (NTC) that natively support provider aliasing and shared configuration templates.

This pattern uses shared Infrastructure as Code configurations with unified deployment pipelines that target multiple partitions from a single source of truth. Shared templates and configurations minimize code duplication whilst maintaining partition isolation through provider aliasing and conditional logic.

Implementation: Single Infrastructure as Code repository with partition-aware templates that deploy to multiple AWS Organizations simultaneously. Shared organizational unit structures, policy definitions, and baseline configurations adapt to partition-specific service availability through feature flags and conditional resource creation.

Account Vending: Unified account vending interface abstracts partition complexity while routing requests to appropriate partition-specific provisioning logic. Single account request workflow with partition selection capability, shared approval processes, and consistent user experience across partitions.

Infrastructure as Code Approaches:

- Nuvibit Terraform Collection (Terraform/OpenTofu): Native support for multi-partition deployment through provider aliasing, shared module libraries, and conditional resource creation based on partition characteristics.

- Landing Zone Accelerator on AWS (CloudFormation): Not supported. Landing Zone Accelerator maps to a single AWS Organization and cannot deploy across multiple partitions.

Provider Aliasing: Terraform/OpenTofu natively supports multiple AWS provider configurations in a single configuration, enabling deployment to multiple partitions simultaneously from shared templates.

Conditional Logic: Rich conditional expressions and feature flags allow templates to adapt to partition-specific service availability without code duplication.

Module Reusability: Shared module libraries with partition-aware parameters eliminate code duplication whilst maintaining consistency across partitions.

Cross-Partition Resources: A single Terraform/OpenTofu configuration can manage resources across partitions, enabling unified account vending and baseline deployment workflows.

Benefits: Minimized code duplication through shared configurations reduces maintenance overhead, consistent organizational structures and policies across partitions, unified account vending provides superior user experience, and single source of truth for governance configurations.

Challenges: Increased complexity in template design with partition-aware logic, dependency on Infrastructure as Code tooling that supports multi-partition deployment, potential for cascading failures affecting multiple partitions, and higher skill requirements for platform teams managing shared configurations.

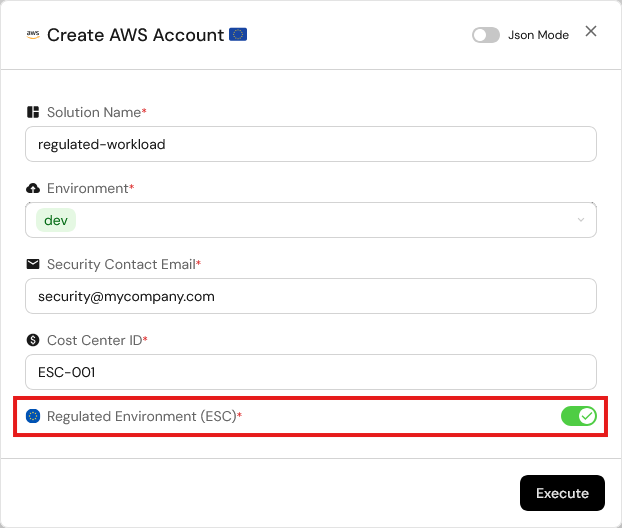

Account Vending Workflow: Users request accounts through a single interface with partition selection based on workload classification and data residency requirements. Conditional logic routes requests to appropriate partition-specific account factories whilst maintaining consistent baseline configurations and approval workflows. Any self-service portal can be easily integrated via GitOps workflows, where account requests trigger git push operations to update the account factory repository, enabling automated provisioning through Infrastructure as Code pipelines.

Figure 7: Self-service account vending implementation with multi-partition support
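The routing step of the account vending workflow can be sketched as follows. This is an illustration only: the classification labels, the `ACCOUNT_FACTORIES` repository names, and the `AccountRequest` fields are hypothetical and would be replaced by an organization's own data model.

```python
from dataclasses import dataclass

# Hypothetical mapping from data classification to target partition.
CLASSIFICATION_TO_PARTITION = {
    "public": "aws",
    "internal": "aws",
    "sovereign": "aws-eusc",
}

# Hypothetical account factory repositories, one per partition.
ACCOUNT_FACTORIES = {
    "aws": "account-factory-commercial",
    "aws-eusc": "account-factory-esc",
}


@dataclass(frozen=True)
class AccountRequest:
    account_name: str
    owner_email: str
    data_classification: str


def route_request(request):
    """Pick the partition and account factory for a request, or raise on unknown input."""
    try:
        partition = CLASSIFICATION_TO_PARTITION[request.data_classification]
    except KeyError:
        raise ValueError(
            f"unknown data classification: {request.data_classification!r}"
        ) from None
    return {
        "partition": partition,
        "factory_repository": ACCOUNT_FACTORIES[partition],
        "account_name": request.account_name,
        "owner_email": request.owner_email,
    }


request = AccountRequest("citizen-portal-prod", "team@example.com", "sovereign")
routed = route_request(request)
print(routed)
```

In a GitOps setup, the returned dictionary would typically be written as a request file and pushed to the selected factory repository; rejecting unknown classifications prevents a request from silently landing in the wrong partition.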

Landing Zone Pattern Comparison

| Pattern | Best For | Pros | Cons | Operational Model | Complexity | Governance |
|---|---|---|---|---|---|---|
| 1. Independent Landing Zone Operations | Organizations prioritizing maximum partition isolation, simple governance boundaries, Landing Zone Accelerator on AWS users | ✅ Maximum partition isolation ✅ Independent operational procedures ✅ Clear compliance boundaries ✅ No cross-partition failure risk ✅ Supports any IaC approach | ❌ Code duplication overhead ❌ Configuration drift risk ❌ Separate account vending processes ❌ Increased maintenance burden ❌ User experience friction | Dual Operations: separate teams or procedures per partition | Low-Medium: independent but duplicated | Separated: independent governance per partition |
| 2. Unified Landing Zone Operations | Organizations with Terraform/OpenTofu expertise, unified operational preferences, emphasis on consistency | ✅ Minimized code duplication ✅ Consistent governance structures ✅ Unified account vending ✅ Single source of truth ✅ Reduced operational overhead | ❌ Increased template complexity ❌ Cross-partition failure risk ❌ Higher skill requirements ❌ Limited to Terraform/OpenTofu ❌ Potential cascading issues | Unified Operations: single team managing both partitions | Medium-High: shared configuration logic | Unified: consistent policies across partitions |

Summary:

- Landing Zone management is the foundation of successful multi-partition operations

- Infrastructure as Code is critical for maintaining consistency and preventing configuration drift

- Pattern selection depends on organizational IaC maturity and governance preferences

- Terraform/OpenTofu provides significant advantages over CloudFormation for multi-partition architectures

- Account vending complexity varies significantly between independent and unified approaches

9.2 Infrastructure as Code and CI/CD

Managing Infrastructure as Code across partitions requires partition‑aware templates, dynamic resource configuration, and careful handling of partition‑specific constraints. The primary challenge lies in creating reusable configurations that adapt to different partition characteristics without hardcoding partition‑specific values.

Infrastructure as Code: The Foundation for Multi-Partition Consistency

Infrastructure as Code is critical for managing consistent configurations across partitions whilst accommodating service availability differences and partition‑specific constraints. Manual configuration management becomes unsustainable when dealing with partition boundaries and service parity gaps.

Preventing Hardcoded Partition Dependencies: Existing IaC code often contains hardcoded ARNs, service endpoints, or partition identifiers that break when deployed across different partitions. Templates must use dynamic resource references and partition‑aware logic to ensure portability.

Partition-Aware Resource Configuration: IaC modules include partition detection logic that adapts resource configurations based on target partition characteristics. Feature flags handle service availability differences, disabling resources or substituting alternatives when services are unavailable in specific partitions.

Dynamic Service Discovery: Rather than hardcoding service endpoints or ARNs, templates use data sources and dynamic references to discover partition‑appropriate resources and endpoints at deployment time.

Avoiding Hardcoded Partition Dependencies

Existing Infrastructure as Code often contains hardcoded references that break when deployed across different partitions. Common issues include hardcoded ARNs with partition identifiers, service endpoints using commercial AWS domains, and resource references that assume specific partition characteristics.

Terraform Example:

```hcl
# Use data sources for dynamic discovery
data "aws_caller_identity" "current" {}
data "aws_partition" "current" {}

locals {
  partition  = data.aws_partition.current.partition
  account_id = data.aws_caller_identity.current.account_id
}

output "role_arn" {
  # Instead of a hardcoded ARN (e.g. arn:aws:iam::123456789012:role/MyRole)
  value = "arn:${local.partition}:iam::${local.account_id}:role/MyRole"
}
```

CloudFormation Example:

```yaml
# Use pseudo parameters
Outputs:
  RoleArn:
    # Instead of a hardcoded ARN (e.g. arn:aws:iam::123456789012:role/MyRole)
    Value: !Sub "arn:${AWS::Partition}:iam::${AWS::AccountId}:role/MyRole"
```
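Before porting existing templates, it can help to scan them for literal partition references. The following is a rough sketch of such a check, assuming simple regular expressions rather than a full HCL or YAML parser; it will also flag matches inside comments, so results need manual review:

```python
import re

# Matches ARNs that spell out a literal partition instead of interpolating it.
HARDCODED_ARN = re.compile(r"arn:(aws|aws-eusc|aws-cn|aws-us-gov):[a-z0-9-]+:")
# Matches endpoint hostnames pinned to a specific partition's DNS suffix.
HARDCODED_ENDPOINT = re.compile(r"[a-z0-9.-]+\.amazonaws\.(com|eu)\b")


def find_hardcoded_references(source):
    """Return (line_number, matched_text) pairs for hardcoded partition references."""
    findings = []
    for number, line in enumerate(source.splitlines(), start=1):
        for pattern in (HARDCODED_ARN, HARDCODED_ENDPOINT):
            for match in pattern.finditer(line):
                findings.append((number, match.group(0)))
    return findings


sample = "\n".join([
    'role_arn = "arn:aws:iam::123456789012:role/MyRole"',
    'portable = "arn:${local.partition}:iam::${local.account_id}:role/MyRole"',
    'endpoint = "https://sts.amazonaws.com"',
])

findings = find_hardcoded_references(sample)
for line_number, text in findings:
    print(f"line {line_number}: {text}")
```

The interpolated ARN on the second line is not reported, which is the behaviour a portable template should exhibit.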

Service Endpoints Use Different Domains: AWS European Sovereign Cloud uses *.amazonaws.eu domain endpoints instead of the commercial *.amazonaws.com domains. This affects:

- API Endpoints: Service APIs use ESC-specific endpoints

- S3 Bucket URLs: S3 bucket access URLs use the ESC domain namespace

- CloudFormation Template URLs: Template and artifact references must use appropriate domain endpoints

- Third-Party Tool Configuration: External tools and integrations require ESC-specific endpoint configuration
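For tools that need explicit endpoint configuration, the domain difference can be captured in one small helper. This is a minimal sketch, assuming only the two partitions discussed here, that ESC region names start with `eusc-`, and that the service follows the common `<service>.<region>.<suffix>` hostname pattern (not every service does):

```python
# DNS suffix per partition; the ESC suffix is taken from the text above.
DNS_SUFFIX = {
    "aws": "amazonaws.com",
    "aws-eusc": "amazonaws.eu",
}


def partition_for_region(region):
    """Infer the partition from a region name (assumes ESC regions start with 'eusc-')."""
    return "aws-eusc" if region.startswith("eusc-") else "aws"


def regional_endpoint(service, region):
    """Build an HTTPS endpoint URL following the <service>.<region>.<suffix> pattern."""
    suffix = DNS_SUFFIX[partition_for_region(region)]
    return f"https://{service}.{region}.{suffix}"


print(regional_endpoint("sts", "eusc-de-east-1"))
print(regional_endpoint("sts", "eu-central-1"))
```

Where an AWS SDK is available, its built-in endpoint resolution is preferable; a helper like this is mainly useful for third-party tools that accept only a raw URL.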

Terraform AWS Provider Support: The Terraform AWS provider is expected to support ESC from day one, with AWS SDKs already updated for ESC compatibility. Any unexpected behavior can be tracked via GitHub Issue #44437.

CodeBuild and CodePipeline Dependencies: Landing Zone Accelerator on AWS (LZA) and Control Tower Account Factory for Terraform (AFT) require AWS CodeBuild and CodePipeline for their deployment automation and ongoing configuration management.

ESC Launch Service Gap: CodeBuild and CodePipeline are not included in the initial AWS European Sovereign Cloud launch services, so these solutions cannot currently be deployed in ESC.

Alternative Approach: Terraform/OpenTofu-based solutions like Nuvibit Terraform Collection (NTC) can deploy to ESC immediately using available services and don't have a mandatory dependency on CodeBuild/CodePipeline.

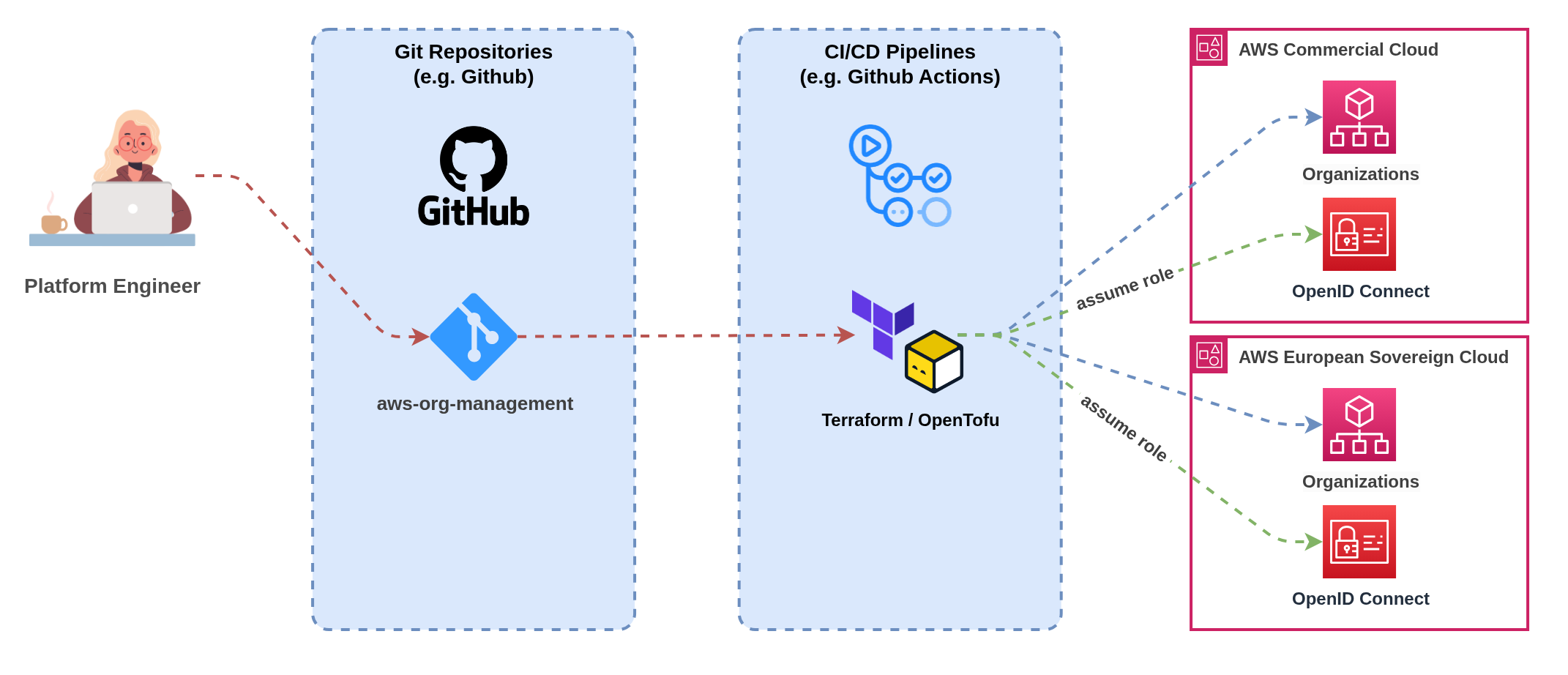

Pattern 1 Individual Pipelines per Partition

- Nuvibit Terraform Collection (NTC)

- Landing Zone Accelerator on AWS (LZA)

Figure 8a: Independent CI/CD pipelines with partition-specific deployment workflows

Figure 8b: Independent CI/CD pipelines with partition-specific deployment workflows

This pattern maintains completely separate CI/CD pipelines for each partition, providing maximum isolation and partition-specific optimisation whilst requiring careful coordination to maintain consistency across deployments.

Implementation: Deploy separate CI/CD infrastructure in each partition or use external CI/CD platforms with partition-specific pipelines. Each pipeline handles source code checkout, testing, artifact creation, and deployment for its respective partition independently. Authentication, service endpoints, and deployment targets remain partition-specific throughout the pipeline execution.

Benefits: Complete pipeline isolation with no cross-partition dependencies, partition-specific optimisation for testing and deployment strategies, clear operational boundaries aligned with compliance requirements, and independent failure domains preventing cascading issues across partitions.

Challenges: Code duplication across pipeline configurations increases maintenance overhead, configuration drift risk between partition-specific pipelines, separate artifact management requiring manual synchronisation, and increased operational complexity for platform teams managing multiple pipeline infrastructures.

Coordination Mechanisms: Version tagging strategies ensure consistent releases across partitions, shared configuration templates minimise pipeline code duplication, cross-partition promotion workflows enable testing in one partition before deploying to another, and unified monitoring provides visibility across all partition-specific pipelines.

Use OpenID Connect (OIDC) for Authentication of External Pipelines: CI/CD pipelines should use OIDC identity providers to authenticate with AWS instead of static access keys. OIDC provides temporary, scoped credentials that improve security and eliminate the need to manage long-lived access keys in pipeline configurations.

ESC OIDC Configuration: For AWS European Sovereign Cloud, ensure your OIDC identity provider is configured with the ESC-specific STS endpoint:

- ESC STS Endpoint: `sts.eusc-de-east-1.amazonaws.eu` (Brandenburg region)

- Commercial STS Endpoint: `sts.amazonaws.com` (default)

Implementation: Configure your CI/CD platform (GitHub Actions, GitLab CI, Azure DevOps, etc.) to assume IAM roles via OIDC rather than using stored AWS access keys. This provides automatic credential rotation, improved audit trails, and eliminates credential storage security risks.
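The role assumed by the pipeline needs a trust policy that is itself partition-aware. The sketch below generates one, assuming GitHub Actions as the OIDC issuer, a hypothetical repository subject, and that the token audience matches the STS endpoints listed above; the audience value for ESC in particular is an assumption to verify against current AWS documentation.

```python
import json

# Audience expected in the OIDC token per partition (assumption based on the
# STS endpoints listed above).
STS_AUDIENCE = {
    "aws": "sts.amazonaws.com",
    "aws-eusc": "sts.eusc-de-east-1.amazonaws.eu",
}


def oidc_trust_policy(partition, account_id, issuer_host, subject):
    """Build an IAM trust policy that lets an OIDC-authenticated pipeline assume a role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {
                    "Federated": f"arn:{partition}:iam::{account_id}:oidc-provider/{issuer_host}"
                },
                "Action": "sts:AssumeRoleWithWebIdentity",
                "Condition": {
                    "StringEquals": {
                        f"{issuer_host}:aud": STS_AUDIENCE[partition],
                        f"{issuer_host}:sub": subject,
                    }
                },
            }
        ],
    }


policy = oidc_trust_policy(
    partition="aws-eusc",
    account_id="222222222222",
    issuer_host="token.actions.githubusercontent.com",
    subject="repo:example-org/landing-zone:ref:refs/heads/main",  # hypothetical repository
)
print(json.dumps(policy, indent=2))
```

Restricting the `sub` claim to a specific repository and branch keeps the role from being assumable by other workflows on the same CI/CD platform.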

Pattern 2 Unified Pipelines for Multi-Partition Deployment

- Nuvibit Terraform Collection (NTC)

- Landing Zone Accelerator on AWS (LZA)

Figure 9a: Single pipeline with multi-partition deployment capabilities

Landing Zone Accelerator on AWS cannot be deployed in a unified pattern.

LZA maps to a single AWS Organization and requires complete duplication of configurations for multi-partition deployments. Each partition needs its own LZA setup with separate configuration files, organizational structures, and operational procedures.

For unified multi-partition operations, consider Terraform/OpenTofu-based solutions like the Nuvibit Terraform Collection (NTC) that natively support provider aliasing and shared configuration templates.

This pattern uses a single CI/CD pipeline that can deploy to multiple partitions from shared source configurations, providing operational efficiency and consistency whilst handling partition-specific deployment requirements through conditional logic and feature flags.

Implementation: Single CI/CD pipeline with partition-aware deployment stages that can target multiple AWS partitions based on configuration parameters. Pipeline includes conditional logic to handle service availability differences, partition-specific authentication mechanisms, and environment-specific deployment parameters.

Benefits: Single source of truth for deployment configurations reduces maintenance overhead, consistent deployment processes across all partitions, shared artifact management with unified promotion workflows, and reduced operational complexity through single pipeline management interface.

Challenges: Increased pipeline complexity handling multiple partition scenarios, potential cascading failures affecting multiple partitions simultaneously, higher skill requirements for teams managing multi-partition pipeline logic, and dependency on CI/CD platforms supporting complex conditional deployment workflows.

Partition-Aware Features: Dynamic endpoint configuration based on target partition, conditional resource deployment handling service availability gaps, partition-specific authentication and credential management, and environment-specific testing and validation strategies.
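Conditional deployment in a unified pipeline can be reduced to a planning step that decides, per partition, which components to deploy and which to skip. The following is a simplified sketch; the `Target` and `Component` types, the component names, and the listed unavailable services are hypothetical inputs that would normally come from pipeline configuration.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Target:
    partition: str
    region: str
    role_arn: str
    unavailable_services: frozenset = field(default_factory=frozenset)


@dataclass(frozen=True)
class Component:
    name: str
    required_services: frozenset


def build_plan(components, targets):
    """Return {partition: {"deploy": [...], "skip": [...]}} based on service availability."""
    plan = {}
    for target in targets:
        deploy, skip = [], []
        for component in components:
            missing = component.required_services & target.unavailable_services
            (skip if missing else deploy).append(component.name)
        plan[target.partition] = {"deploy": deploy, "skip": skip}
    return plan


components = [
    Component("network-baseline", frozenset({"ec2"})),
    Component("build-runners", frozenset({"codebuild"})),
]
targets = [
    Target("aws", "eu-central-1", "arn:aws:iam::111111111111:role/oidc-role"),
    Target(
        "aws-eusc",
        "eusc-de-east-1",
        "arn:aws-eusc:iam::222222222222:role/oidc-role",
        frozenset({"codebuild", "codepipeline"}),
    ),
]

plan = build_plan(components, targets)
print(plan)
```

Computing the plan before any deployment stage runs makes skipped components visible in review, rather than surfacing as failures halfway through a rollout.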

Example: Multi-Partition Configuration with Nuvibit Terraform Collection (NTC)

```hcl
# --------------------------------------------------------------------------------------------
# ¦ PROVIDER - MULTI-PARTITION
# --------------------------------------------------------------------------------------------
provider "aws" {
  alias  = "aws_frankfurt"
  region = "eu-central-1"

  # OpenID Connect (OIDC) integration
  assume_role_with_web_identity {
    role_arn                = "arn:aws:iam::111111111111:role/oidc-role"
    session_name            = "unified-pipeline"
    web_identity_token_file = "/tmp/web-identity-token"
  }
}

provider "aws" {
  alias  = "aws_eusc_brandenburg"
  region = "eusc-de-east-1"

  # OpenID Connect (OIDC) integration
  assume_role_with_web_identity {
    role_arn                = "arn:aws-eusc:iam::222222222222:role/oidc-role"
    session_name            = "unified-pipeline"
    web_identity_token_file = "/tmp/web-identity-token"
  }
}

# --------------------------------------------------------------------------------------------
# ¦ LOCALS
# --------------------------------------------------------------------------------------------
# Define shared configuration that will be deployed across multiple partitions
locals {
  organizational_unit_paths = [
    "/root/core",
    "/root/sandbox",
    "/root/suspended",
    "/root/transitional",
    "/root/workloads",
    "/root/workloads/prod",
    "/root/workloads/dev",
    "/root/workloads/test"
  ]
}

# --------------------------------------------------------------------------------------------
# ¦ NTC ORGANIZATIONS - COMMERCIAL
# --------------------------------------------------------------------------------------------
module "ntc_organizations" {
  source = "github.com/nuvibit-terraform-collection/terraform-aws-ntc-organizations?ref=X.X.X"

  # list of nested (up to 5 levels) organizational units
  organizational_unit_paths = local.organizational_unit_paths

  # additional inputs...

  providers = {
    aws = aws.aws_frankfurt
  }
}

# --------------------------------------------------------------------------------------------
# ¦ NTC ORGANIZATIONS - ESC
# --------------------------------------------------------------------------------------------
module "ntc_organizations_esc" {
  source = "github.com/nuvibit-terraform-collection/terraform-aws-ntc-organizations?ref=X.X.X"

  # list of nested (up to 5 levels) organizational units
  organizational_unit_paths = local.organizational_unit_paths

  # additional inputs...

  providers = {
    aws = aws.aws_eusc_brandenburg
  }
}
```

Multi-Partition CI/CD Pattern Comparison

| Pattern | Best For | Pros | Cons | Operational Model | Complexity | Consistency |
|---|---|---|---|---|---|---|
| 1. Individual Pipelines per Partition | Organizations prioritizing maximum partition isolation, partition-specific deployment requirements, strict compliance boundaries | ✅ Complete pipeline isolation ✅ Partition-specific optimisation ✅ Independent failure domains ✅ Clear operational boundaries ✅ Supports any CI/CD platform | ❌ Pipeline code duplication ❌ Configuration drift risk ❌ Separate artifact management ❌ Increased maintenance overhead ❌ Complex cross-partition coordination | Separated Operations: independent pipeline teams or processes | Medium: duplicated but isolated | Manual: requires coordination mechanisms |
| 2. Unified Pipelines for Multi-Partition | Organizations with advanced CI/CD maturity, emphasis on consistency, unified operational preferences | ✅ Single source of truth ✅ Consistent deployment processes ✅ Shared artifact management ✅ Reduced operational overhead ✅ Unified promotion workflows | ❌ Increased pipeline complexity ❌ Cross-partition failure risk ❌ Higher skill requirements ❌ Platform dependency for multi-partition support ❌ Potential cascading issues | Unified Operations: single team managing multi-partition pipeline | High: complex conditional logic | Automated: built-in consistency mechanisms |

Summary:

- Infrastructure as Code is essential for consistent multi-partition configuration management

- Dynamic resource discovery prevents hardcoded partition dependencies

- ESC introduces endpoint and service availability differences requiring careful handling

- CI/CD pattern selection depends on organizational maturity and isolation requirements

9.3 Identity and Access Management (SSO)

Managing identity and access across partitions requires careful consideration of authentication patterns, permission management, and operational complexity. Organizations face four primary approaches based on their identity infrastructure maturity and external system dependencies.

Infrastructure as Code: The Foundation for Multi-Partition Identity Management

Infrastructure as Code is critical for managing IAM Identity Center across multiple partitions without configuration drift. Manual console-based configuration of permission sets, account assignments, and user/group mappings quickly becomes operationally unsustainable and introduces significant security risks when scaled across partitions.

Preventing Configuration Drift: IaC templates ensure identical identity configurations deploy across partitions, with version control tracking all permission set changes and automated drift detection identifying deviations between partitions. This eliminates the manual errors that commonly occur when administrators configure identity settings separately in each partition.

Permission Set Consistency: Template-driven permission set deployments guarantee that access controls remain consistent across partitions, enabling security teams to verify permissions through code review rather than partition-by-partition inspection. Policy-as-code approaches embed access requirements directly into infrastructure definitions.

Account Assignment Automation: Automated account assignment workflows ensure users receive consistent access across appropriate partitions based on their role and data classification requirements. Templates can deploy conditional assignments based on partition-specific service availability or compliance requirements.

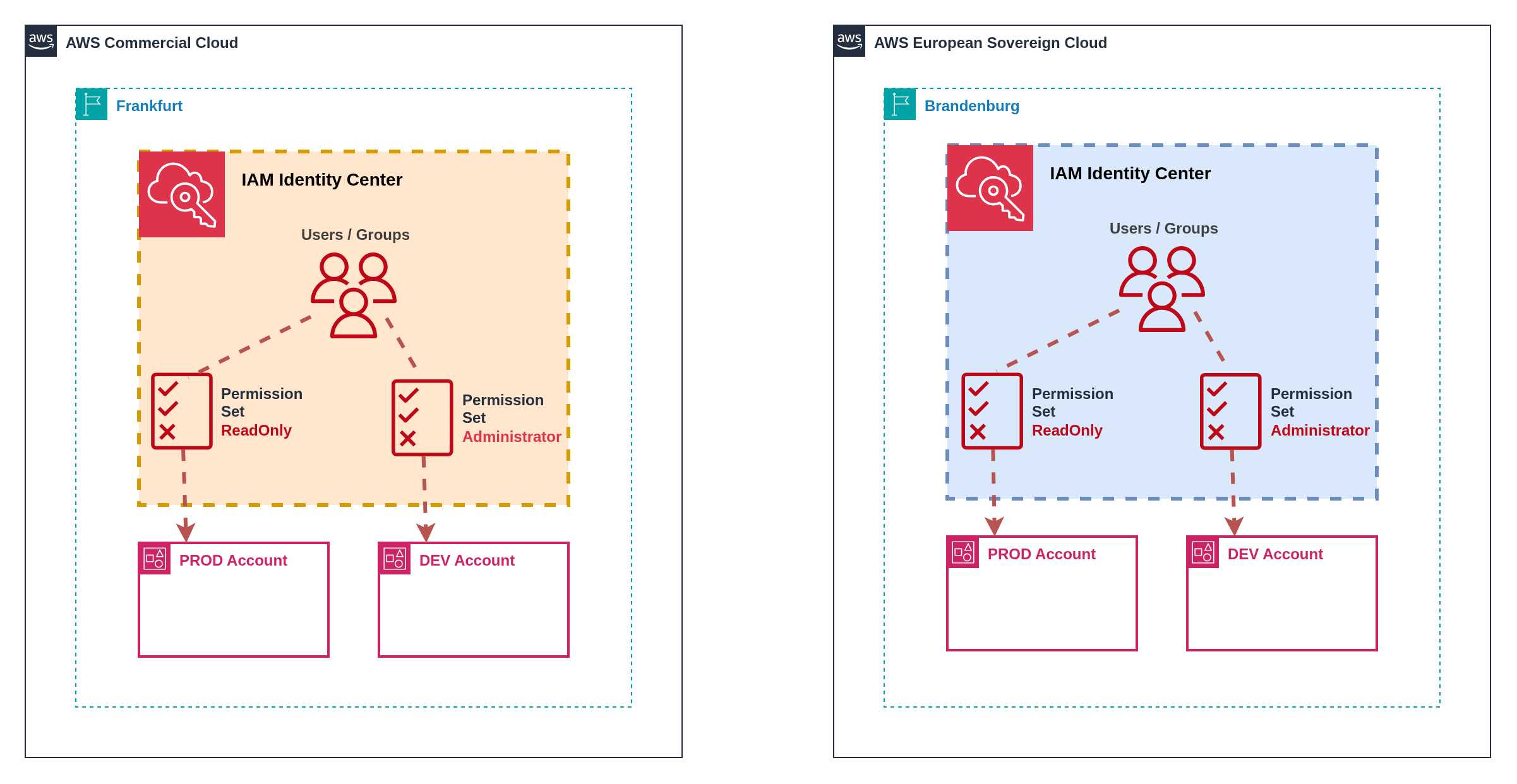

Pattern 1 Independent Identity Centers Without External IdP

Figure 10: Independent Identity Centers with separate user stores

Organizations without external identity providers such as Microsoft Entra ID or Okta must maintain separate IAM Identity Center instances with independent user stores across partitions. This approach provides maximum simplicity but introduces significant operational overhead and user experience challenges.

Implementation: Deploy IAM Identity Center in each partition with separate user directories. Users must register separately in each partition, set independent passwords, and configure separate multi-factor authentication devices. Each partition provides distinct access portal URLs requiring separate bookmark management.

User Experience: Users maintain separate credentials for each partition and must remember which applications reside in which partition. Password policies, MFA requirements, and account lifecycle management operate independently.

Operational Considerations: Identity administrators must maintain separate user directories, duplicate password reset procedures, and manage independent MFA device registrations. User onboarding requires separate account creation workflows for each partition where access is required.

Security Implications: Independent MFA devices reduce security correlation across partitions but provide stronger isolation if one partition is compromised. Password complexity and rotation policies can differ between partitions unless explicitly standardized through operational procedures.

Independent identity centers create significant operational challenges:

Duplicate User Management: Every user requiring access to both partitions needs separate account creation, password management, and MFA configuration. This doubles administrative overhead and increases the likelihood of configuration inconsistencies.

Inconsistent Access Patterns: Users may have different permission levels across partitions due to separate assignment processes, creating security risks and compliance challenges. Manual synchronization of access rights becomes error-prone at scale.

Support Fragmentation: Password resets, account lockouts, and MFA issues require partition-specific support procedures. Help desk teams need training on multiple systems and access to separate administrative consoles.

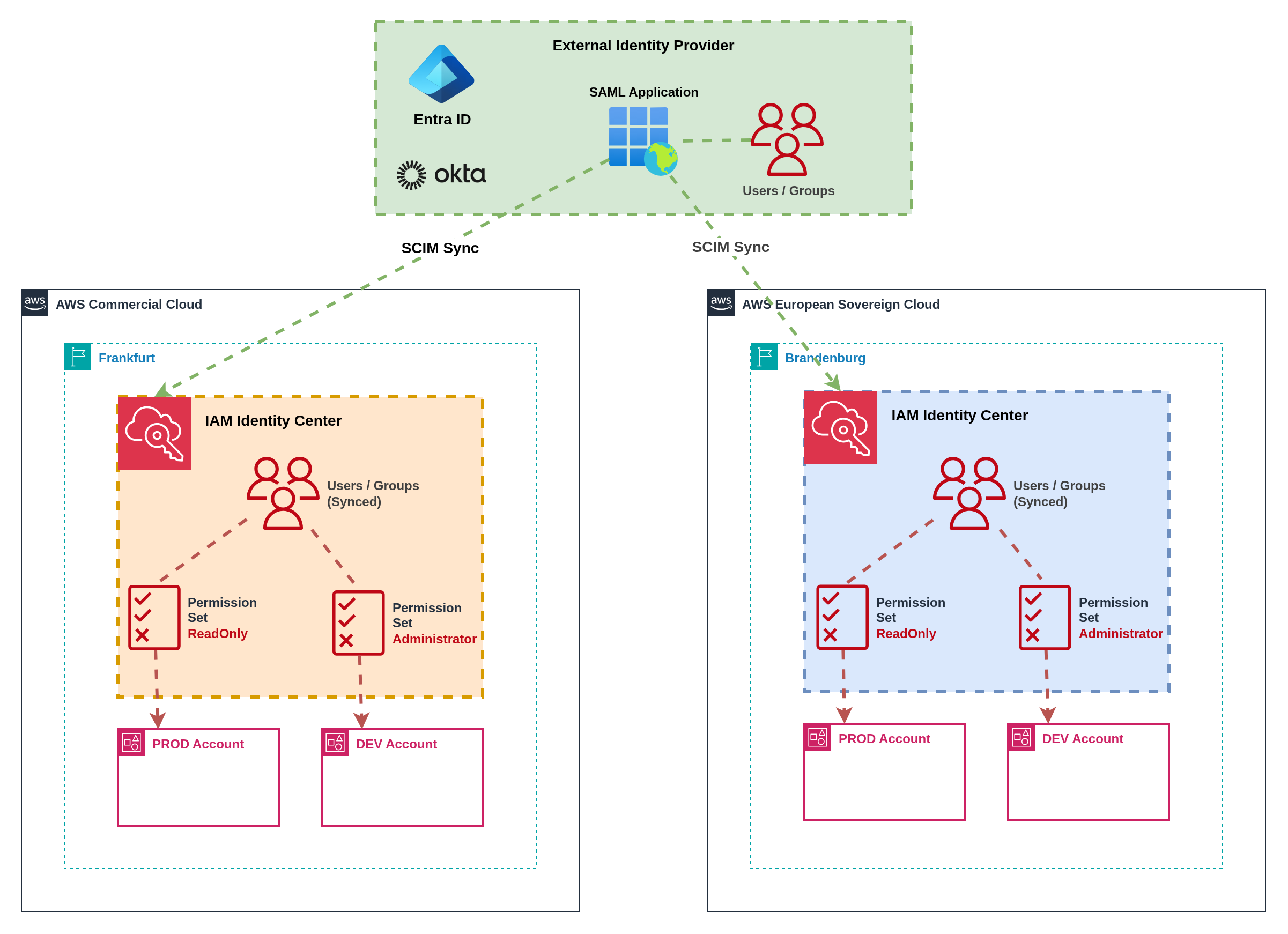

Pattern 2 Identity Centers with External IdP Integration

Figure 11: External IdP integration with SCIM synchronization

External identity providers such as Microsoft Entra ID or Okta serve as the authoritative source for user identities and group memberships across partitions. The SCIM (System for Cross-domain Identity Management) protocol synchronizes user and group information to the IAM Identity Center instances in both partitions.

Implementation: Configure SCIM provisioning from external IdP to IAM Identity Center instances in both partitions. Users and groups synchronize automatically, maintaining consistent identity representation whilst preserving partition isolation. External IdP provides centralized authentication with partition-specific authorization.
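To illustrate what the IdP sends during provisioning, the sketch below builds one SCIM 2.0 core User resource (RFC 7643) and plans an identical create request for each partition. In practice the IdP's built-in SCIM connector performs this work; the endpoint URLs here are placeholders, not real IAM Identity Center endpoints, and no network call is made.

```python
import json
from typing import Dict, List

# Hypothetical SCIM base URLs. Real values come from each IAM Identity Center
# instance's automatic provisioning settings.
SCIM_ENDPOINTS: Dict[str, str] = {
    "commercial": "https://scim.commercial.example/scim/v2",
    "esc": "https://scim.esc.example/scim/v2",
}


def build_scim_user(user_name: str, given: str, family: str, email: str) -> dict:
    """Build a SCIM 2.0 core User resource."""
    return {
        "schemas": ["urn:ietf:params:scim:schemas:core:2.0:User"],
        "userName": user_name,
        "name": {"givenName": given, "familyName": family},
        "displayName": f"{given} {family}",
        "emails": [{"value": email, "type": "work", "primary": True}],
        "active": True,
    }


def plan_provisioning(user: dict) -> List[dict]:
    """Describe one POST /Users request per partition; nothing is sent here."""
    body = json.dumps(user, sort_keys=True)
    return [
        {"partition": name, "method": "POST", "url": f"{base}/Users", "body": body}
        for name, base in SCIM_ENDPOINTS.items()
    ]
```

Because both requests carry the same body, the identity representation stays consistent, while each partition keeps its own endpoint and its own access token.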

User Experience: Users access a centralized application portal (such as myapps.microsoft.com, the Okta dashboard, or a corporate intranet) containing links to partition-specific AWS access portals. Single sign-on (SSO) provides seamless authentication to both partitions with partition-aware application tiles.

Permission Management: Permission sets replicate across partitions with partition-specific adaptations for service availability differences. Attribute-based access control (ABAC) patterns use user attributes and group memberships to determine access rights across partitions. Role-based access control (RBAC) provides structured permission hierarchies that adapt to partition capabilities.
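One way to replicate a permission set with partition-specific adaptations is to render it from a single template. The sketch below assumes the ARN partition identifiers `aws` and `aws-eusc` (verify these against AWS documentation) and uses an invented availability map with a fictional `exampleservice`; it does not describe actual ESC service coverage.

```python
from typing import Dict, List, Set

# Assumed ARN partition identifiers; confirm before relying on them.
ARN_PARTITION: Dict[str, str] = {"commercial": "aws", "esc": "aws-eusc"}

# Invented availability map, for illustration only.
AVAILABLE_SERVICES: Dict[str, Set[str]] = {
    "commercial": {"s3", "exampleservice"},
    "esc": {"s3"},
}

# Hypothetical permission set template with a {partition} placeholder.
TEMPLATE: List[dict] = [
    {
        "service": "s3",
        "actions": ["s3:GetObject"],
        "resource": "arn:{partition}:s3:::example-bucket/*",
    },
    {
        "service": "exampleservice",
        "actions": ["exampleservice:DoThing"],
        "resource": "*",
    },
]


def render_policy(partition: str) -> dict:
    """Render an IAM policy document, skipping services the partition lacks."""
    statements = []
    for stmt in TEMPLATE:
        if stmt["service"] not in AVAILABLE_SERVICES[partition]:
            continue  # service not available in this partition
        statements.append(
            {
                "Effect": "Allow",
                "Action": stmt["actions"],
                "Resource": stmt["resource"].format(
                    partition=ARN_PARTITION[partition]
                ),
            }
        )
    return {"Version": "2012-10-17", "Statement": statements}
```

Rendering from one template keeps the intent of a permission set in a single place, so differences between partitions are limited to what the availability map and ARN prefix dictate.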

Group-Based Access Control: External IdP groups control access to specific partitions and permission levels. Groups like "ESC-Developers" and "Commercial-Admins" provide granular control over who can access which partition with what permissions. Group membership changes in the external IdP automatically propagate to appropriate partitions via SCIM.
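As a rough illustration of group-based control, the mapping below resolves a user's IdP groups into per-partition permission sets. The group names come from the example above; the permission set names and the mapping itself are assumptions made for this sketch.

```python
from typing import Dict, Iterable, Set, Tuple

# IdP group -> (partition, permission set). Permission set names are hypothetical.
GROUP_MAP: Dict[str, Tuple[str, str]] = {
    "ESC-Developers": ("esc", "DeveloperAccess"),
    "Commercial-Admins": ("commercial", "AdministratorAccess"),
}


def resolve_access(groups: Iterable[str]) -> Dict[str, Set[str]]:
    """Map a user's IdP groups to the permission sets granted per partition."""
    access: Dict[str, Set[str]] = {}
    for group in groups:
        if group not in GROUP_MAP:
            continue  # groups without a mapping grant no AWS access
        partition, permission_set = GROUP_MAP[group]
        access.setdefault(partition, set()).add(permission_set)
    return access
```

A user in "ESC-Developers" and an unrelated group would resolve to developer access in ESC only, with no entry for the commercial partition.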

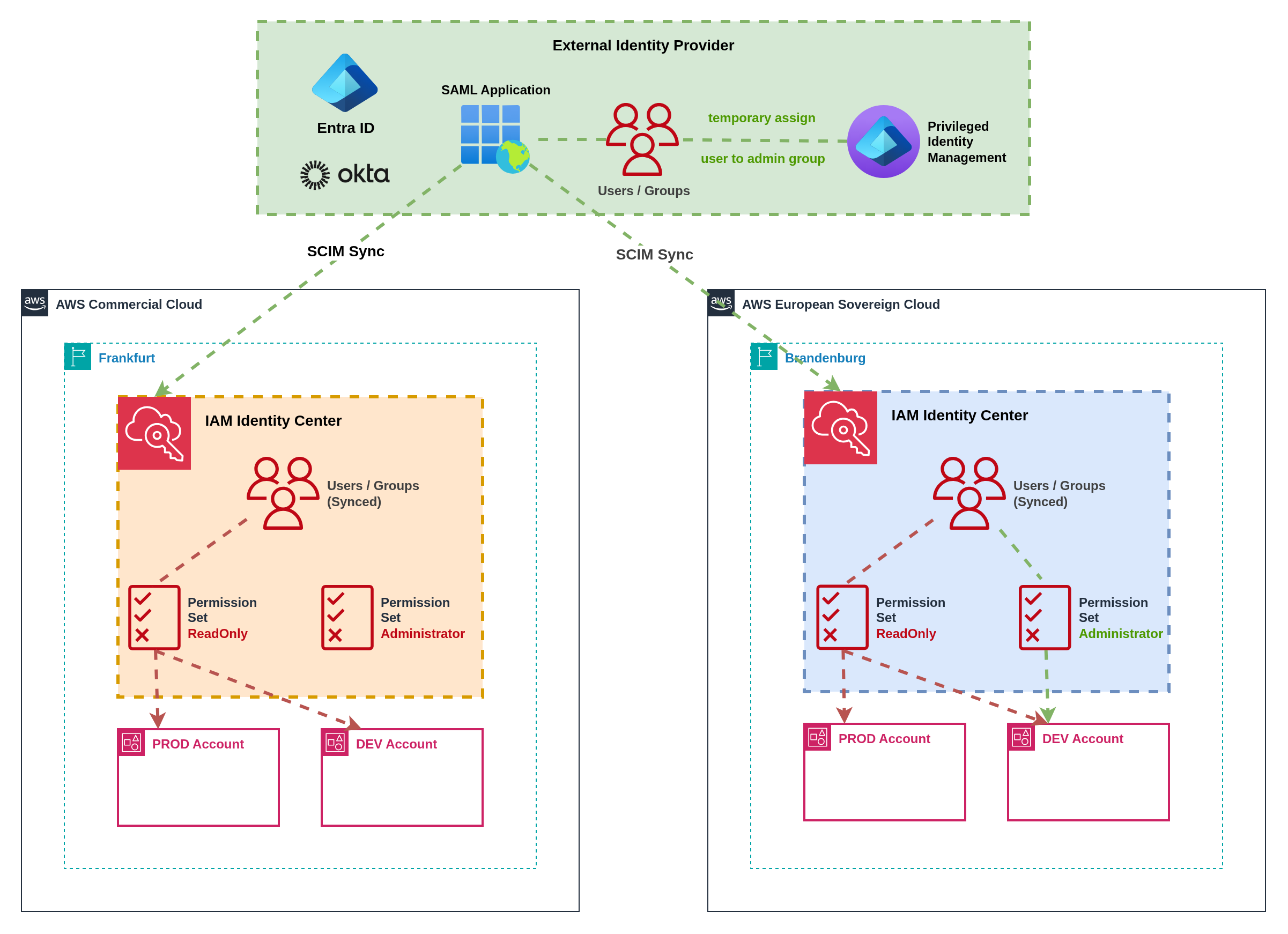

Pattern 3 External IdP with Just-in-Time Elevated Access

Figure 12: Just-in-time elevated access through external IdP automation


Organizations requiring temporary elevated permissions can implement just-in-time (JIT) access patterns through external IdP automation. This approach provides time-bound administrative access with appropriate approval workflows and audit trails across partitions.

Implementation: Custom automation workflows temporarily add users to privileged groups in the external IdP, triggering SCIM synchronization to grant elevated permissions in target partitions. Approval workflows gate access requests with time-bound assignments that automatically expire. Integration with ticketing systems provides audit trails and business justification.

Workflow Process: Users request elevated access through self-service portals or API integrations, specifying justification, duration, and target partition(s). Approval workflows route requests to appropriate managers or security teams. Upon approval, automation temporarily adds users to privileged IdP groups, enabling elevated access that automatically expires at the specified time.
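The expiry step of this workflow can be sketched as a time-bound grant record plus a periodic sweep. This is a minimal in-memory illustration; the group name is hypothetical, and a real implementation would call the IdP's group membership API when granting and revoking.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Tuple


@dataclass(frozen=True)
class Grant:
    user: str
    group: str  # privileged IdP group, e.g. "ESC-Admins" (hypothetical)
    partition: str
    expires_at: datetime


def approve(
    user: str, group: str, partition: str, duration: timedelta, now: datetime
) -> Grant:
    """Record an approved request as a grant that expires after `duration`."""
    return Grant(user, group, partition, now + duration)


def split_expired(
    grants: List[Grant], now: datetime
) -> Tuple[List[Grant], List[Grant]]:
    """Return (active, expired); expired grants should be removed from the IdP group."""
    active = [g for g in grants if g.expires_at > now]
    expired = [g for g in grants if g.expires_at <= now]
    return active, expired


start = datetime(2026, 3, 1, 9, 0, tzinfo=timezone.utc)
grant = approve("alice", "ESC-Admins", "esc", timedelta(hours=2), start)
```

Passing `now` explicitly keeps the logic deterministic and easy to test; a scheduler would call `split_expired` with the current UTC time and revoke whatever it returns as expired.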

Cross-Partition Coordination: Automation workflows can grant elevated access across multiple partitions simultaneously or independently based on request requirements. Permission elevation in ESC may require separate approval workflows due to sovereignty requirements, whilst commercial partition access follows standard procedures.

Audit and Compliance: All elevation requests, approvals, and access grants generate comprehensive audit logs across external IdP and AWS CloudTrail. Time-bound access ensures privileged permissions don't persist beyond business requirements, reducing security exposure and simplifying compliance reporting.

AWS TEAM (Temporary Elevated Access Management) is an open-source solution published by AWS for just-in-time access management with IAM Identity Center. However, AWS TEAM does not support multi-partition environments and must be deployed independently in each partition where elevated access is required.

Multi-Partition Implications: Organizations using AWS TEAM must:

- Deploy separate TEAM instances per partition with independent workflows

- Maintain separate approval processes and audit trails

- Train administrators on partition-specific TEAM operations

- Accept operational overhead of managing multiple TEAM deployments

Alternative Approaches: External IdP automation or third-party JIT solutions provide better multi-partition support with unified workflows and centralized audit trails.

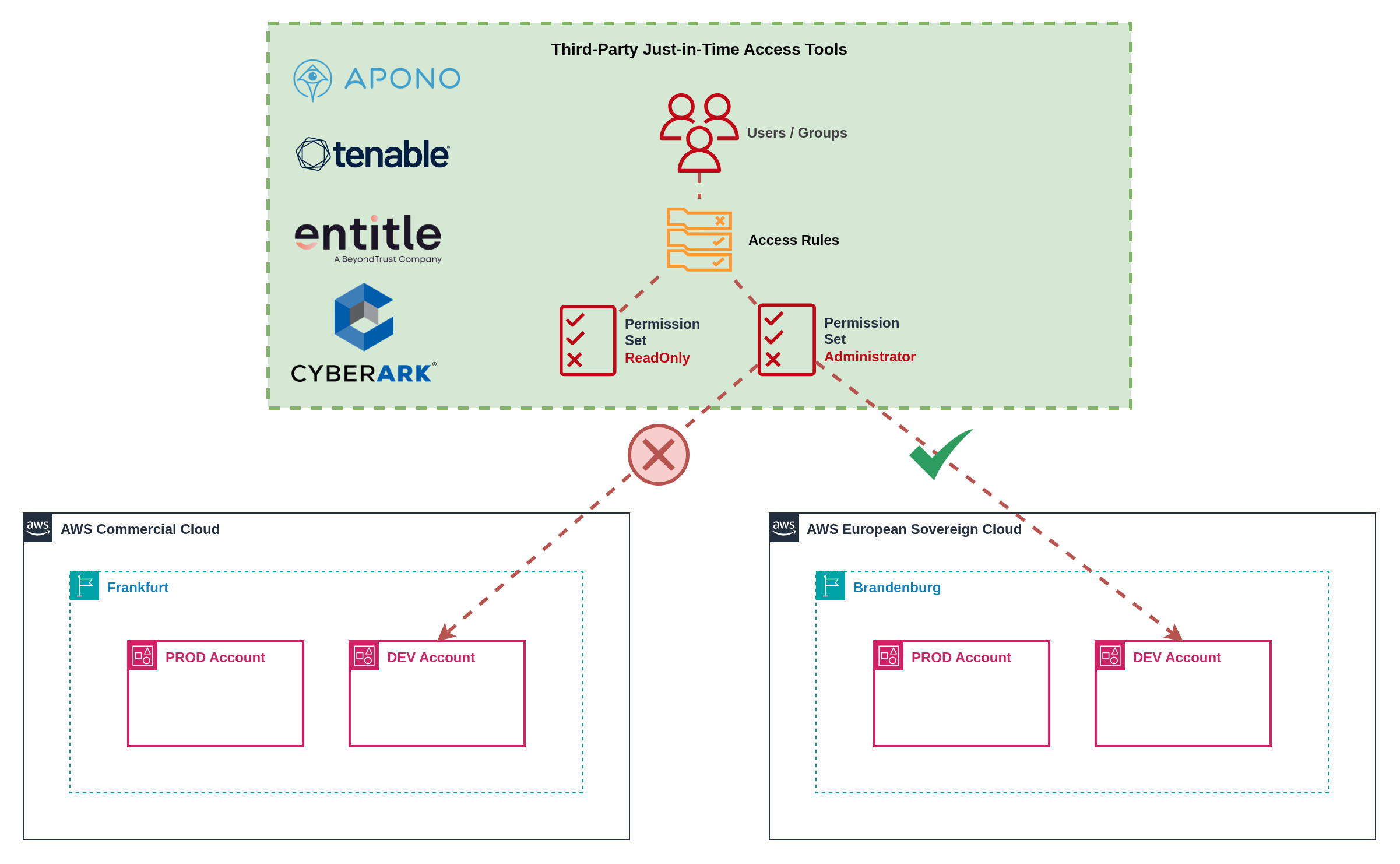

Pattern 4 Third-Party Just-in-Time Access Tools

Figure 13: Third-party just-in-time access management platform


Specialized third-party tools like Apono, CyberArk, Tenable, or Entitle provide advanced just-in-time access management with single entry points and sophisticated approval workflows. These platforms offer unified access request interfaces whilst handling partition-specific integration requirements.

Implementation: Deploy third-party JIT platforms with custom integrations to each AWS partition. Platforms provide centralized request interfaces with approval workflows that can route to partition-specific approvers. Integration APIs handle permission elevation across multiple partitions through a single user interface.

Advanced Features: Third-party tools often provide sophisticated features including risk-based access decisions, session recording, privileged account discovery, and automated access reviews. Integration with identity governance platforms enables comprehensive access certification and compliance reporting.

Single Pane of Glass: Users submit access requests through unified interfaces without needing to understand partition boundaries. Administrative teams manage approval workflows, policies, and compliance reporting through centralized dashboards that span multiple AWS partitions and cloud providers.

Custom Integration Requirements: Each third-party tool requires custom integration development to support multiple AWS partitions effectively. Integration complexity varies significantly between platforms, with some providing built-in multi-cloud support whilst others require extensive customization.

ESC Compatibility: Third-party identity and access management tools are expected to add support for the AWS European Sovereign Cloud following general availability, but support is not guaranteed from day one.

Expected Challenges:

- Integration Development: Vendors need time to develop and test ESC-specific integrations

- API Compatibility: ESC-specific endpoints and authentication patterns may require platform updates

- Feature Parity: Initial ESC support may lack feature parity with commercial AWS integrations

- Certification Timelines: Security certifications and compliance validations may delay ESC support

Planning Recommendations: Organizations should engage with identity platform vendors early to understand ESC roadmaps and support timelines. Plan for potential delays or limitations in third-party tool support during ESC early adoption phases.

Multi-Partition Identity Pattern Comparison

| Pattern | Best For | Pros | Cons | User Experience | Complexity | Cost Profile |
|---|---|---|---|---|---|---|
| 1. Independent Identity Centers | Small organizations without external IdP infrastructure, maximum partition isolation requirements | ✅ Maximum partition isolation ✅ No external dependencies ✅ Simple initial setup ✅ AWS-native authentication ✅ No additional licensing | ❌ Duplicate user management overhead ❌ Inconsistent access patterns ❌ Multiple credential sets per user ❌ Fragmented support procedures ❌ Manual synchronization required | Poor: Multiple logins, passwords, MFA devices | Medium: Dual administration | Low: No additional licensing costs; high operational overhead |
| 2. External IdP Integration | Organizations with existing IdP infrastructure, need for consistent identity management, SCIM provisioning capabilities | ✅ Centralized identity management ✅ Automatic user/group synchronization ✅ Consistent permission models ✅ Single authentication source ✅ Familiar user experience | ❌ External IdP dependency ❌ SCIM configuration complexity ❌ Potential sync delays ❌ Additional licensing costs ❌ Permission set replication overhead | Good: Centralized portal with partition links | Medium: SCIM configuration & maintenance | Medium: External IdP licensing; SCIM provisioning costs |
| 3. External IdP JIT Access | Organizations requiring temporary elevated permissions, strong audit requirements, time-bound administrative access | ✅ Time-bound elevated access ✅ Comprehensive audit trails ✅ Automated approval workflows ✅ Centralized request interface ✅ Cross-partition coordination | ❌ Custom automation development ❌ Complex approval workflow design ❌ External IdP dependency ❌ Potential sync delays for elevation ❌ Limited to IdP group-based permissions | Good: Self-service elevation requests | High: Custom automation & workflows | Medium-High: External IdP licensing; custom development costs |
| 4. Third-Party JIT Tools | Large enterprises with complex access requirements, advanced audit needs, multi-cloud environments | ✅ Advanced JIT capabilities ✅ Unified access request interface ✅ Sophisticated approval workflows ✅ Session recording & monitoring ✅ Multi-cloud platform support | ❌ Additional platform licensing ❌ Custom integration development ❌ Vendor dependency ❌ Potential ESC support delays ❌ Complex platform management | Excellent: Single interface for all access requests | High: Platform integration & management | High: Third-party platform licensing; integration development costs |

Summary:

- Identity management complexity increases significantly across partitions without external IdP integration

- External IdP integration provides the best balance of user experience and operational efficiency

- Just-in-time access patterns require careful design but significantly improve security posture

- Infrastructure as Code is essential for maintaining consistent identity configurations across partitions

- Third-party tool support for ESC may be delayed, requiring fallback planning for early adoption

9.4 Security and Compliance

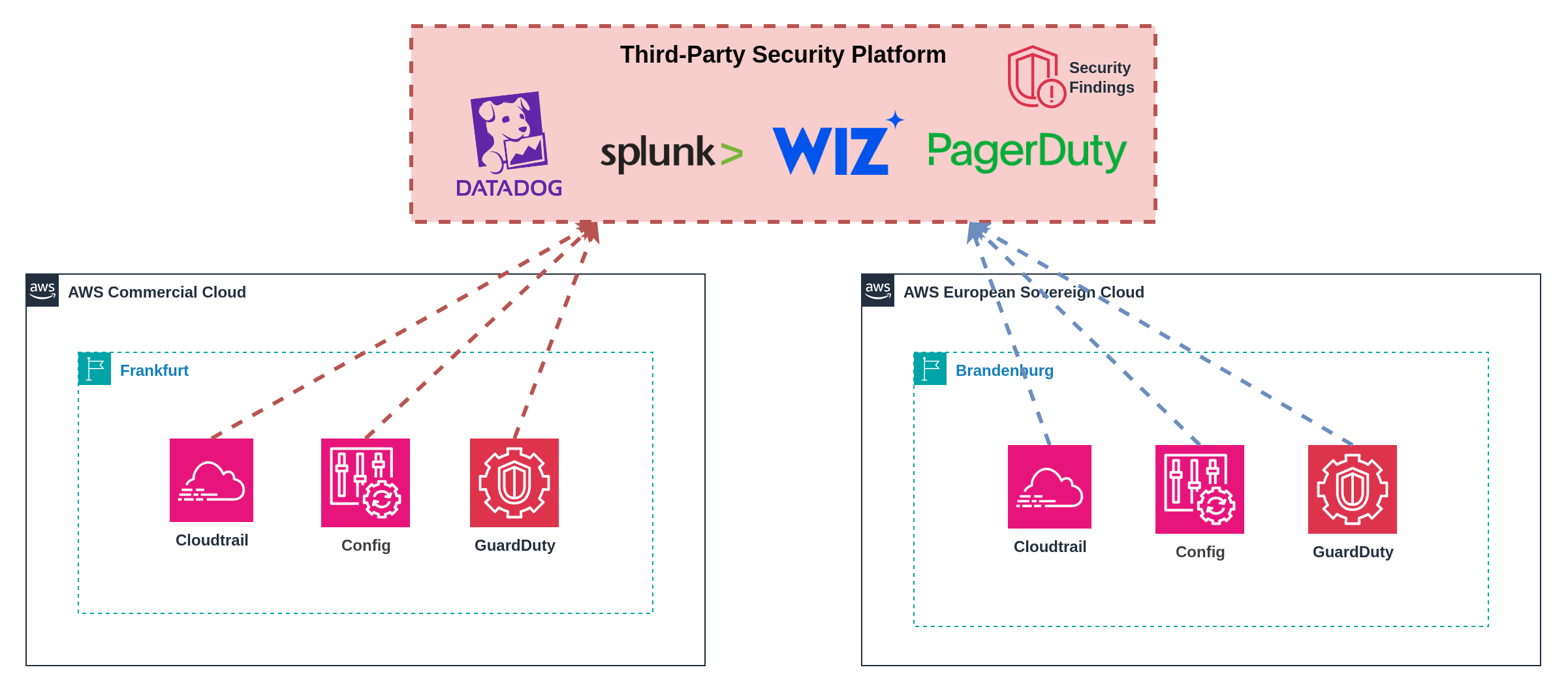

Maintaining consistent security posture across partitions whilst respecting sovereignty boundaries requires partition‑aware security architectures and compliant telemetry handling. Organizations face three primary approaches based on their operational preferences, sovereignty requirements, and existing security infrastructure investments.

Infrastructure as Code: The Foundation for Multi-Partition Security Management

Infrastructure as Code (IaC) is critical for achieving multi-partition security and compliance parity whilst preventing configuration drift. Without IaC, maintaining consistent security postures across partitions becomes operationally unsustainable and introduces significant compliance risks.

Preventing Configuration Drift: IaC templates ensure identical security configurations deploy across partitions, with version control tracking all changes and automated drift detection identifying deviations. This eliminates the manual configuration errors that commonly occur when managing multiple environments through console-based administration.
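At its core, drift detection is a comparison between the configuration declared in code and the configuration observed in an environment. The sketch below shows that comparison on flat key/value settings; the setting names are hypothetical, and real IaC tools perform this check against live resource state.

```python
from typing import Any, Dict


def detect_drift(
    desired: Dict[str, Any], actual: Dict[str, Any]
) -> Dict[str, Dict[str, Any]]:
    """Return every setting whose observed value differs from the declared one."""
    drift: Dict[str, Dict[str, Any]] = {}
    for key in sorted(desired.keys() | actual.keys()):
        want, have = desired.get(key), actual.get(key)
        if want != have:
            drift[key] = {"desired": want, "actual": have}
    return drift


# Hypothetical settings declared in a template and observed in one partition.
desired = {"encryption_at_rest": True, "log_retention_days": 365}
actual = {"encryption_at_rest": True, "log_retention_days": 90, "public_access": True}
findings = detect_drift(desired, actual)
```

Running the same check with the same `desired` baseline against each partition makes deviations directly comparable across partitions.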

Compliance Parity Assurance: Template-driven deployments guarantee that compliance controls remain consistent across partitions, enabling auditors to verify control implementation through code review rather than environment-by-environment inspection. Policy-as-code approaches embed compliance requirements directly into infrastructure definitions.

Partition-Aware Templates: Use conditional logic and feature flags to adapt to service availability differences between partitions. Templates can deploy alternative implementations based on the current partition, ensuring functional equivalence despite service parity gaps.
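The conditional logic described above can be reduced to an ordered list of implementations per capability, with the first one available in the target partition selected. The capability and implementation names below are invented for illustration and do not reflect actual service availability in either partition.

```python
from typing import Dict, List, Set

# Preferred implementation first, fallbacks after. All names are hypothetical.
IMPLEMENTATIONS: Dict[str, List[str]] = {
    "threat_detection": ["managed-detector", "self-hosted-ids"],
    "object_storage": ["managed-object-store"],
}

# Invented availability per partition, for illustration only.
AVAILABLE: Dict[str, Set[str]] = {
    "commercial": {"managed-detector", "self-hosted-ids", "managed-object-store"},
    "esc": {"self-hosted-ids", "managed-object-store"},
}


def select_implementation(capability: str, partition: str) -> str:
    """Pick the first implementation of a capability available in the partition."""
    for option in IMPLEMENTATIONS[capability]:
        if option in AVAILABLE[partition]:
            return option
    raise LookupError(f"no implementation of {capability!r} in {partition!r}")
```

Raising an error when no option exists makes a parity gap fail the deployment loudly rather than silently omitting a control.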

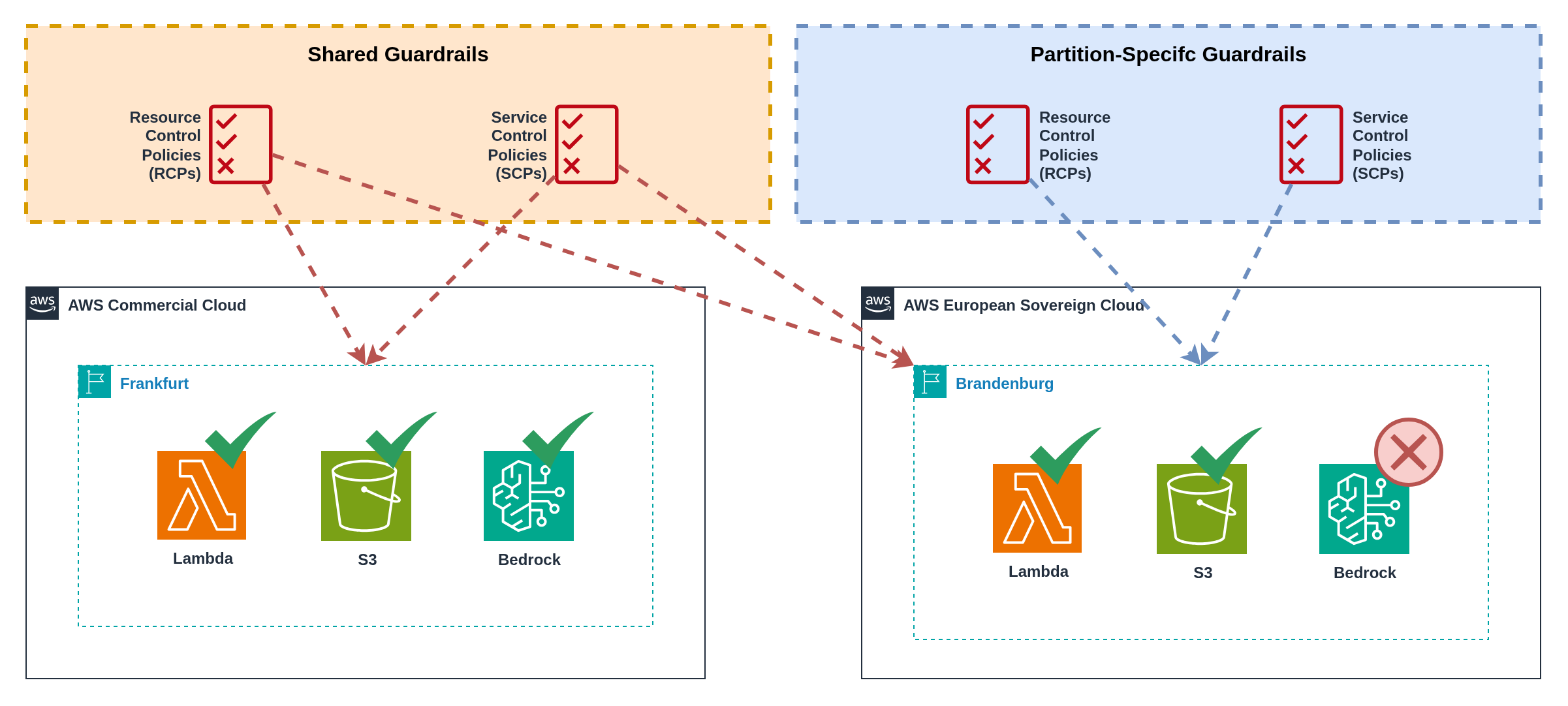

Guardrails Consistency: Shared guardrails include fundamental security controls such as root account restrictions, encryption requirements, network access limitations, and audit logging mandates. Partition-specific guardrails adapt to service availability, implementing alternative controls when services are unavailable or configuring partition‑aware resource restrictions.

Figure 14: Guardrails distribution across partitions


Pattern 1 Separate Native AWS Security Operations

Figure 15: Independent security operations with partition-specific teams


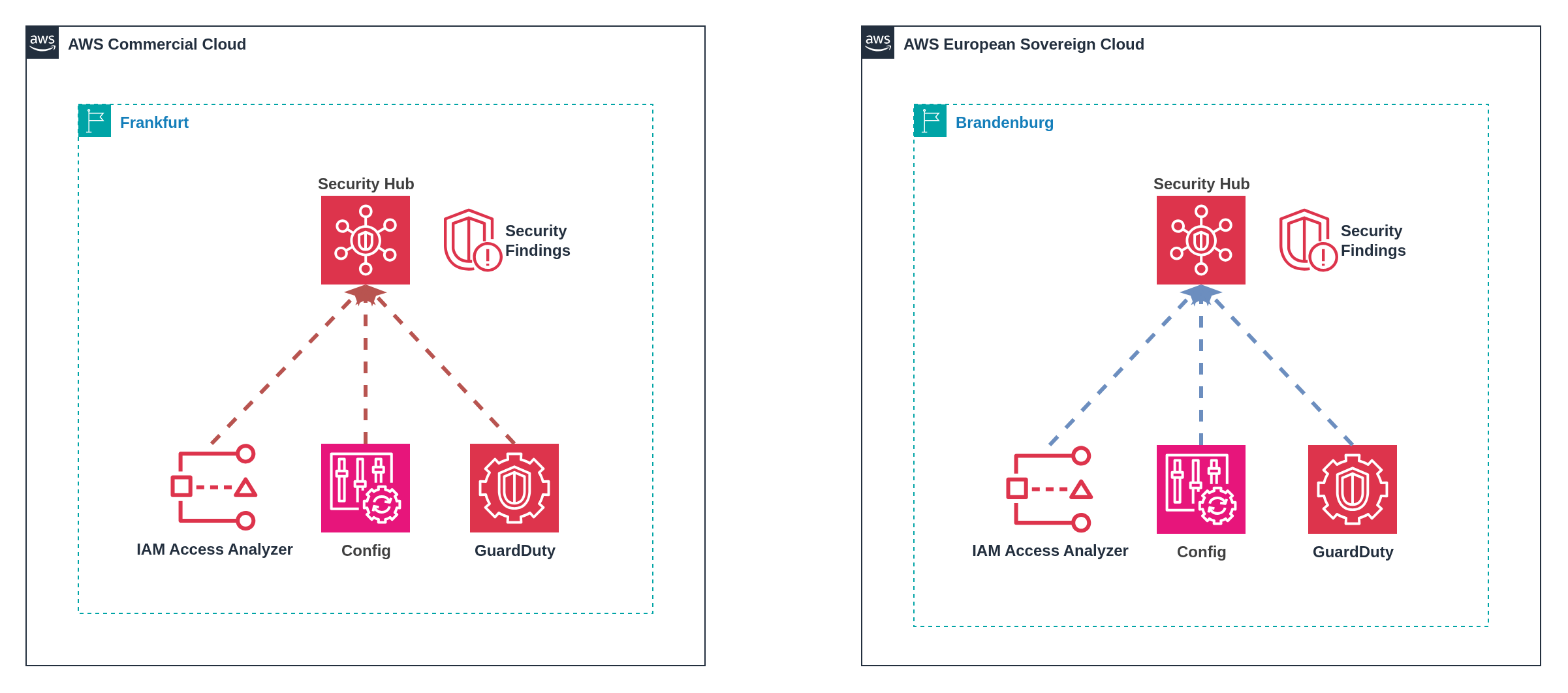

Operating Security Hub independently in each partition provides native AWS integration whilst maintaining clear sovereignty boundaries. Each partition operates its own security dashboard with partition-specific alerting and response procedures, ensuring ESC security events remain within EU governance domains.

Implementation: Deploy Security Hub, GuardDuty, Config, and other security services in both partitions with separate configuration and alerting. Security operations teams maintain partition-specific playbooks and escalation procedures, with alerts routing to appropriate regional or compliance-specific response teams.
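Partition-specific alert routing can key off the partition segment of an ARN. The sketch below reads the `ProductArn` field of a finding in AWS Security Finding Format and selects a response team; the team names and the `aws-eusc` partition identifier are assumptions to verify for your environment.

```python
from typing import Dict

# ARN partition -> response team queue. Queue names are hypothetical, and the
# "aws-eusc" identifier is an assumption to confirm against AWS documentation.
ROUTES: Dict[str, str] = {
    "aws": "commercial-soc",
    "aws-eusc": "esc-eu-soc",
}


def partition_of(arn: str) -> str:
    """Extract the partition from arn:partition:service:region:account:resource."""
    parts = arn.split(":", 5)
    if len(parts) != 6 or parts[0] != "arn":
        raise ValueError(f"not a valid ARN: {arn!r}")
    return parts[1]


def route_finding(finding: dict) -> str:
    """Return the response team for a finding based on its ProductArn partition."""
    partition = partition_of(finding["ProductArn"])
    if partition not in ROUTES:
        raise KeyError(f"no route configured for partition {partition!r}")
    return ROUTES[partition]
```

Since each partition runs its own Security Hub, a router like this would normally be deployed per partition; the partition check then acts as a safeguard that ESC findings are never handed to a team outside the intended governance domain.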

Operational Model: Dedicated security teams or team members focus on specific partitions, developing deep expertise in partition-specific service availability and operational constraints. Incident response procedures accommodate AWS operational differences between partitions, particularly ESC's EU-resident support model.

Benefits: Complete sovereignty compliance with no cross-partition data movement, native AWS tooling integration without additional platform costs, clear operational boundaries aligned with compliance requirements, and simplified compliance auditing with partition-specific evidence trails.

Dashboard Management: Separate CloudWatch dashboards, Security Hub findings, and compliance reports maintain clear partition boundaries whilst requiring security analysts to monitor multiple interfaces. Custom tagging strategies help maintain context when switching between partition-specific tools.

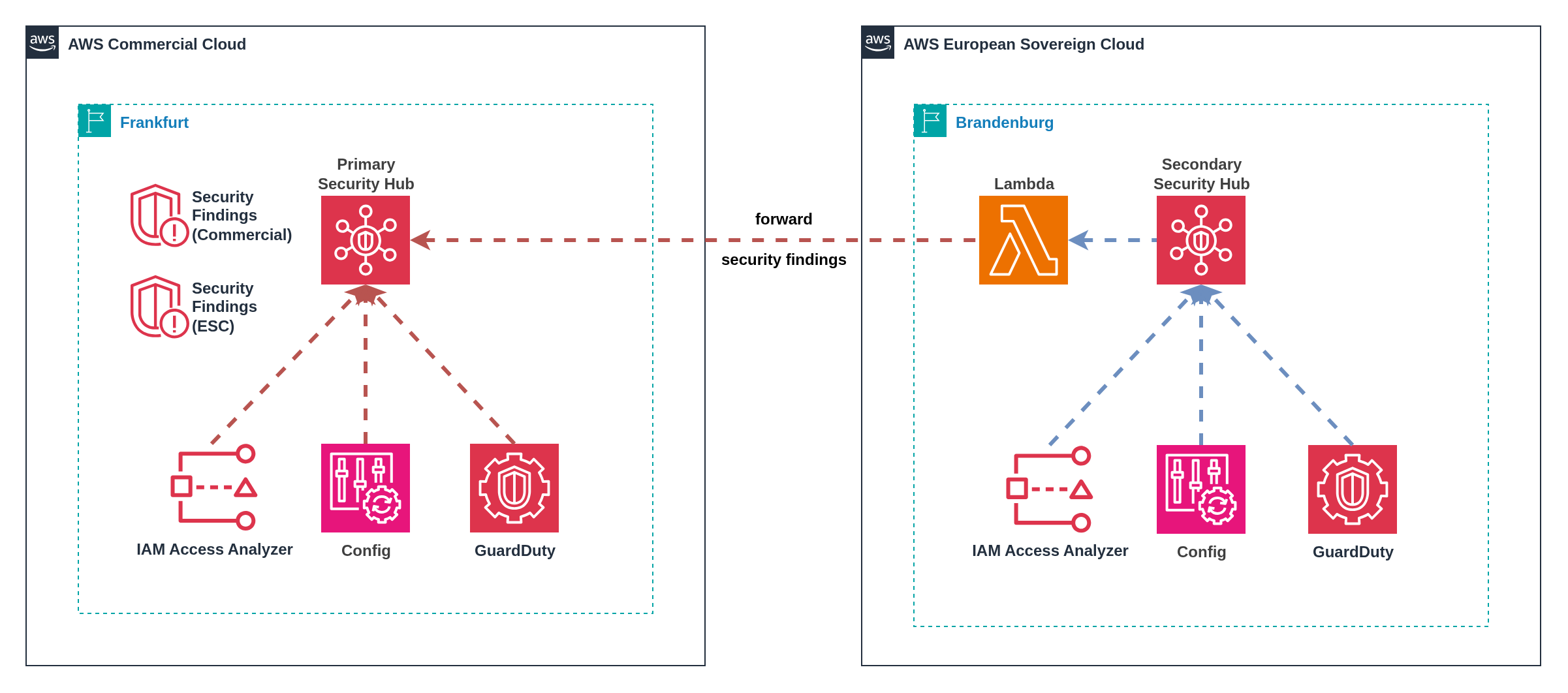

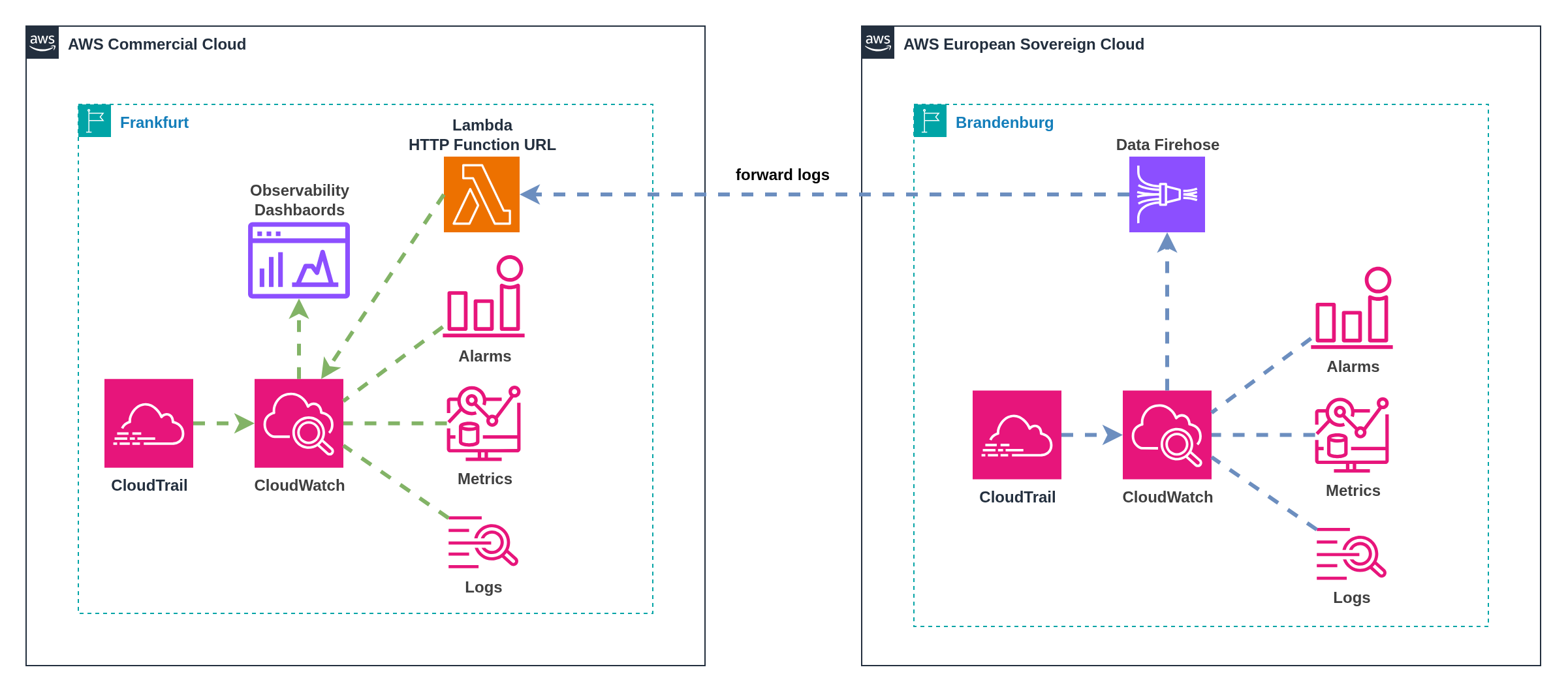

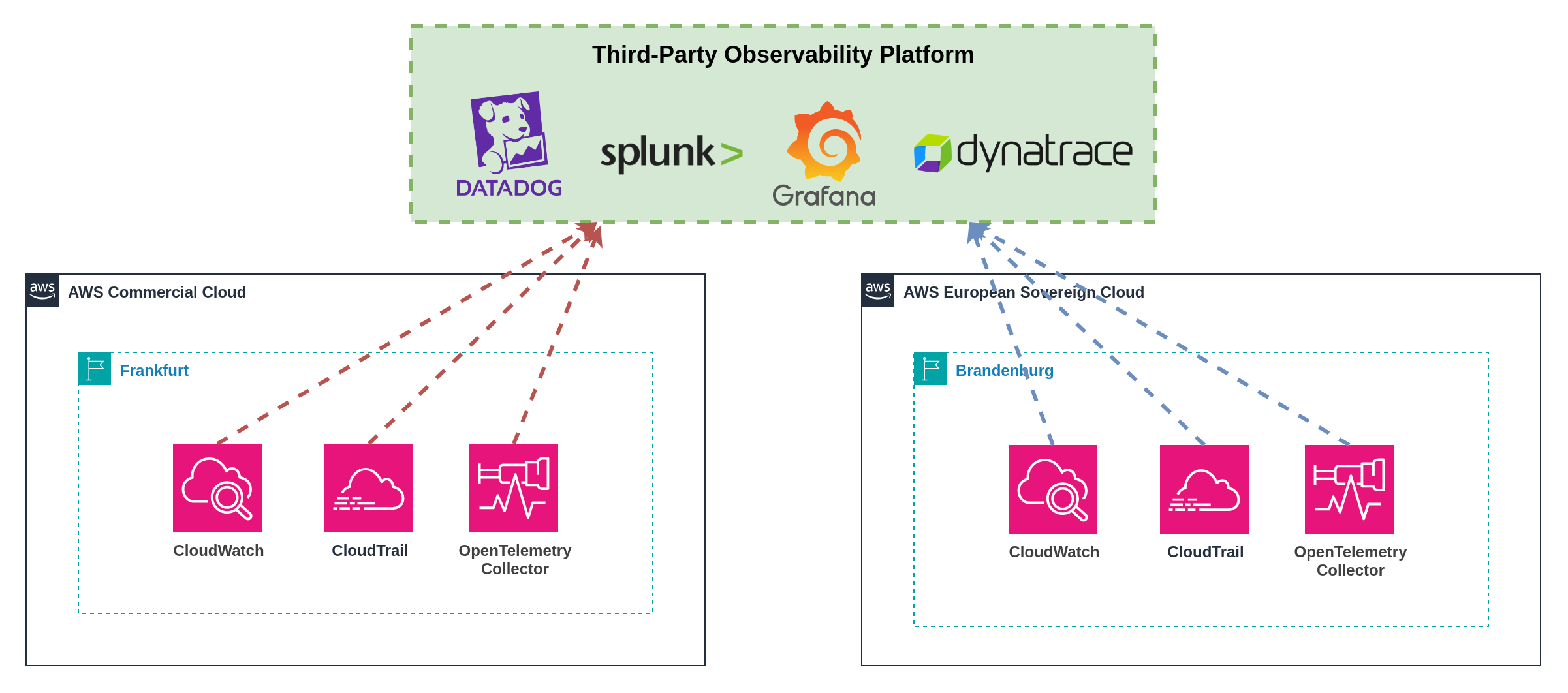

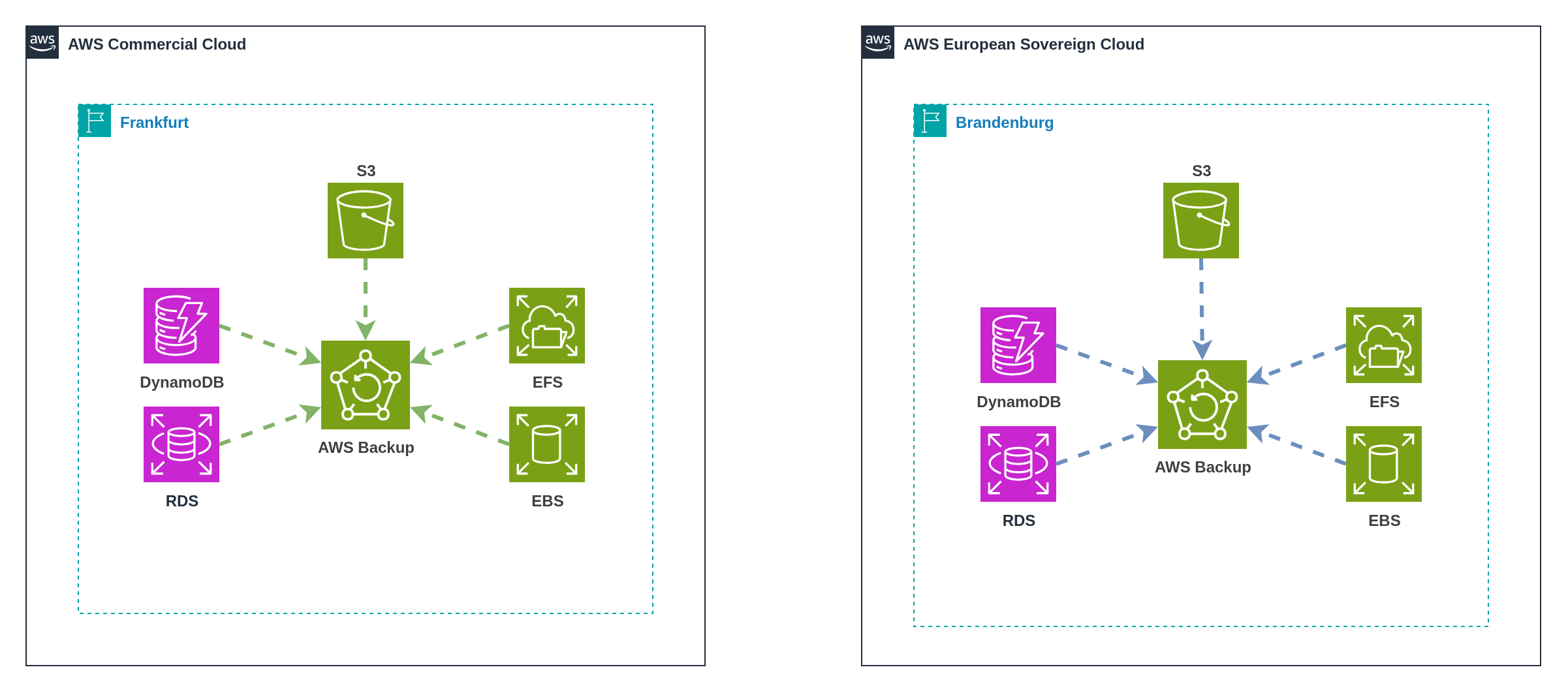

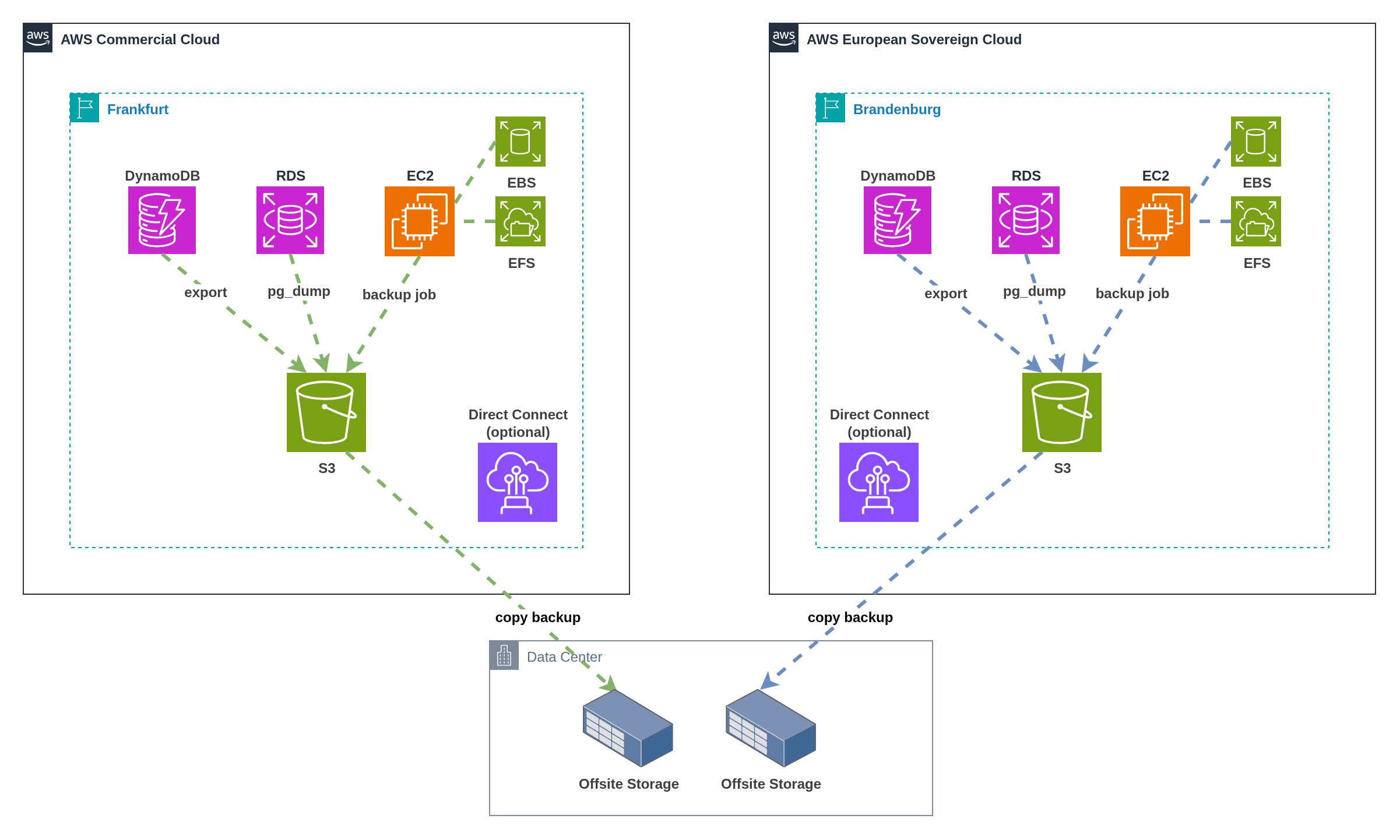

Pattern 2 Cross-Partition Security Hub Forwarding

Figure 16: Centralized Security Hub with cross-partition finding forwarding
